RabbitMQ as the Inter-Module Backbone in Datahub

How RabbitMQ enables decentralized communication between modules

Series: Designing a Microservice-Friendly Datahub

PART III — CASE STUDY: MY CSL DATAHUB IMPLEMENTATION

Previous: The .NET Processor: Orchestration and Translation in Datahub

Next: End-to-End Data Flow Scenarios in Datahub

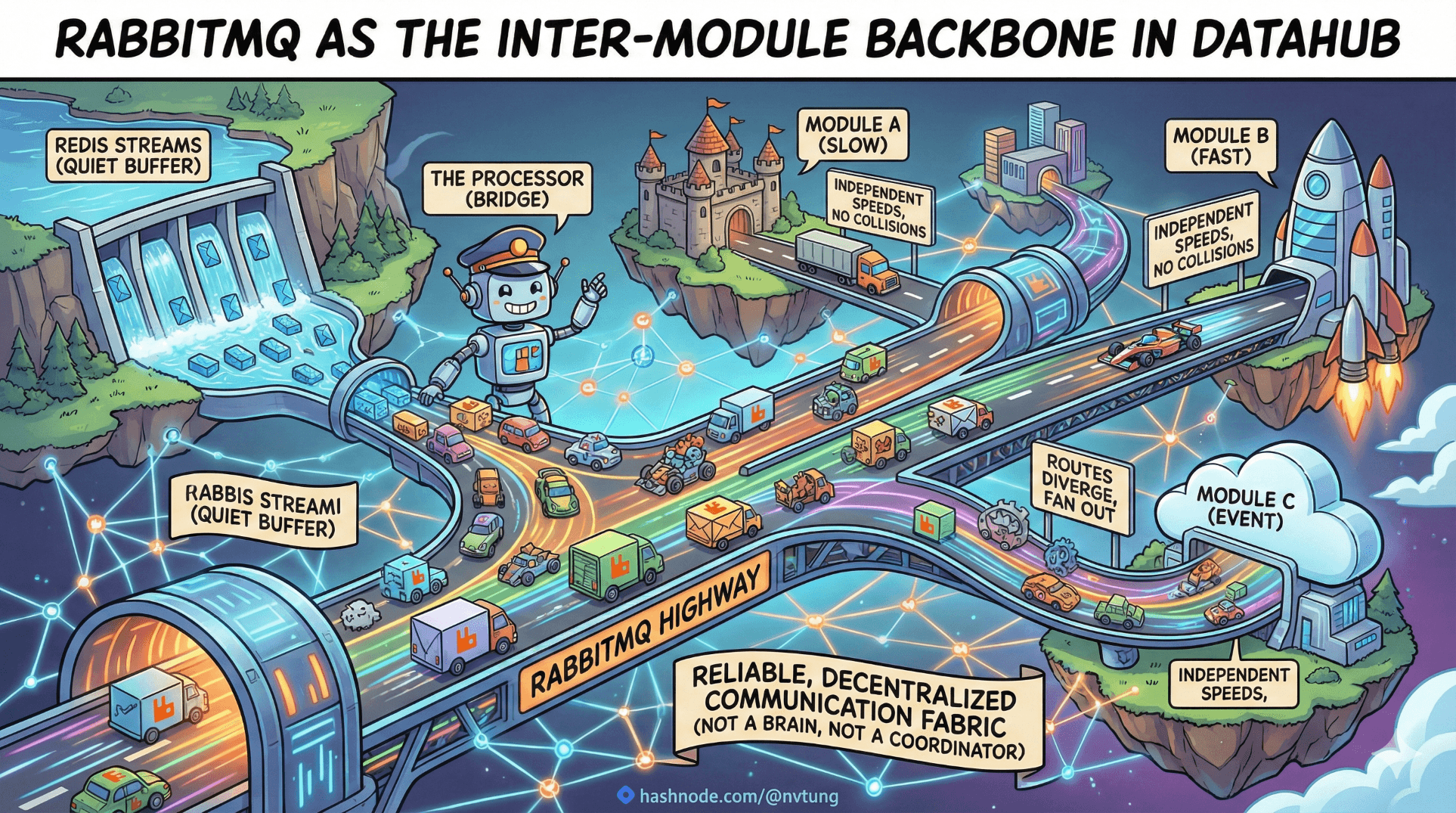

If Redis Streams are the quiet buffer and the Processor is the bridge, RabbitMQ is the highway—the place where events fan out, routes diverge, and independent modules move at their own speed without colliding.

This article explains how RabbitMQ functions as the inter-module backbone in the CSL Datahub: not as a coordinator, not as a brain, but as a reliable, decentralized communication fabric.

Disclaimer (Context & NDA)

The CSL Datahub implementation described here was designed and built in 2021. While the architectural principles remain valid, some configurations and library versions may since have been superseded. To comply with NDA requirements, domain-specific payloads, schemas, and proprietary workflows are intentionally generalized.

Why RabbitMQ Sits at the Center (But Doesn’t Control)

RabbitMQ’s job in the CSL Datahub is deliberately narrow:

Accept published events

Route them based on declared rules

Deliver them reliably to consumers

It does not:

Enforce business meaning

Orchestrate workflows

Decide who should react

That restraint is what allows the system to scale.

RabbitMQ is logically central but behaviorally neutral.

Exchanges: Where Routing Decisions Live

In RabbitMQ, producers do not send messages to queues. They send messages to exchanges.

An exchange answers one question only:

“Given this message, which queues should receive it?”

In CSL, a topic exchange is commonly used:

    channel.ExchangeDeclare(
        exchange: "csl.events",
        type: ExchangeType.Topic,
        durable: true
    );

Topic exchanges allow routing by pattern, which is essential for multi-module systems.
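To make pattern routing concrete, here is a minimal JavaScript sketch of how topic matching behaves: `*` stands for exactly one dot-separated word and `#` for zero or more. The function name `topicMatches` is hypothetical, and the broker performs this matching itself; the sketch only illustrates the semantics.

```javascript
// Illustrative model of AMQP topic matching (the broker does this for you).
// '*' matches exactly one dot-separated word, '#' matches zero or more words.
function topicMatches(pattern, routingKey) {
  const p = pattern.split('.');
  const k = routingKey.split('.');

  function match(i, j) {
    // Pattern exhausted: it is a match only if the key is exhausted too.
    if (i === p.length) return j === k.length;
    if (p[i] === '#') {
      // Try letting '#' consume 0, 1, 2, ... of the remaining words.
      for (let n = j; n <= k.length; n++) {
        if (match(i + 1, n)) return true;
      }
      return false;
    }
    if (j === k.length) return false;
    if (p[i] === '*' || p[i] === k[j]) return match(i + 1, j + 1);
    return false;
  }

  return match(0, 0);
}
```

Under this model, `user.*` matches `user.updated` but not `user.profile.updated`, while `user.#` matches both.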

Routing Strategies: Meaningful Keys, Not Magic Strings

Routing keys encode what happened, not who should react.

Example routing keys:

user.updated

user.created

order.completed

profile.* (a binding pattern used by consumers, not a key a producer publishes)

Publishing looks like this:

    channel.BasicPublish(
        exchange: "csl.events",
        routingKey: "user.updated",
        body: Serialize(message)
    );

The producer doesn’t know—or care—who is listening.

That ignorance is a feature.
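For modules that publish from Node.js, the same idea might look like the sketch below. It assumes an amqplib channel; the helper name `publishEvent` and the envelope fields (`type`, `occurredAt`, `payload`) are hypothetical and not taken from the CSL implementation.

```javascript
// Hypothetical helper: builds a semantic routing key and publishes a JSON event.
// `channel` is assumed to be an amqplib channel; the envelope shape is illustrative.
function publishEvent(channel, entity, action, payload) {
  const routingKey = `${entity}.${action}`; // e.g. "user.updated"
  const envelope = {
    type: routingKey,
    occurredAt: new Date().toISOString(),
    payload,
  };
  const body = Buffer.from(JSON.stringify(envelope));
  // persistent: true asks the broker to store the message on disk in durable queues.
  return channel.publish('csl.events', routingKey, body, {
    persistent: true,
    contentType: 'application/json',
  });
}
```

The helper names what happened and says nothing about who should react, which keeps the producer unaware of its consumers.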

Consumers in Other Modules: Opt-In, Not Coupled

Each external module declares its own queue and binds it to the exchange using patterns it cares about.

Example (Node.js):

    channel.assertQueue('notifications.user', { durable: true });

    channel.bindQueue(
      'notifications.user',
      'csl.events',
      'user.*'
    );

Another module might bind more narrowly:

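    // The module declares its own queue before binding it.
    channel.assertQueue('analytics.user', { durable: true });

    channel.bindQueue(
      'analytics.user',
      'csl.events',
      'user.updated'
    );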

No coordination required.

No shared code.

No shared database.

Each consumer opts in.

Publish/Subscribe in Practice

This is pub/sub without central planning:

One event is published

Many modules receive it

Each reacts independently

None block the others

If one consumer:

Is slow → its queue grows

Is down → messages wait in its durable queue

Is buggy → only it suffers

The rest of the system continues.

That isolation is the foundation of reliability.
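The isolation described above can be modelled in a few lines without a broker. The following is a toy in-memory sketch, not RabbitMQ itself: the class `TinyTopicExchange` and its methods are hypothetical, and only the `*` wildcard is modelled. It shows that each bound queue receives its own copy of an event, so one consumer's backlog never touches another's.

```javascript
// In-memory model of a topic exchange with one queue per consumer.
// Illustration only: no broker involved, and only the '*' wildcard is modelled.
class TinyTopicExchange {
  constructor() {
    this.bindings = [];      // { queueName, regex }
    this.queues = new Map(); // queueName -> pending messages
  }

  bind(queueName, pattern) {
    // Turn "user.*" into a regex where '*' matches exactly one word.
    const source = pattern
      .split('.')
      .map((word) => (word === '*' ? '[^.]+' : word.replace(/[.*+?^${}()|[\]\\]/g, '\\$&')))
      .join('\\.');
    this.bindings.push({ queueName, regex: new RegExp(`^${source}$`) });
    if (!this.queues.has(queueName)) this.queues.set(queueName, []);
  }

  publish(routingKey, message) {
    // Every queue with a matching binding gets its own copy of the message.
    const targets = new Set(
      this.bindings.filter((b) => b.regex.test(routingKey)).map((b) => b.queueName)
    );
    for (const name of targets) this.queues.get(name).push(message);
    return targets.size;
  }

  backlog(queueName) {
    return this.queues.get(queueName).length;
  }

  drain(queueName) {
    // Simulates a consumer processing everything currently queued.
    return this.queues.get(queueName).splice(0);
  }
}

const exchange = new TinyTopicExchange();
exchange.bind('notifications.user', 'user.*');
exchange.bind('analytics.user', 'user.updated');

exchange.publish('user.updated', { id: 1 }); // reaches both queues
exchange.publish('user.created', { id: 2 }); // reaches only notifications.user

exchange.drain('analytics.user'); // the fast consumer catches up
```

After this runs, `analytics.user` is empty while `notifications.user` still holds two messages: the slow consumer's queue grows and nothing else is affected.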

Message Flow Patterns You Actually See in Production

RabbitMQ supports many patterns. In CSL, a few dominate.

1. Fan-Out (Broadcast)

One event, many consumers.

Used for:

State changes

Notifications

Cache invalidation

Read model updates

2. Selective Subscription

Consumers bind narrowly.

Used for:

Domain-specific reactions

Reduced noise

Ownership clarity

3. Asymmetric Load

Different consumers process at different speeds.

RabbitMQ absorbs this naturally: each consumer has its own queue, so a backlog in one does not delay delivery to the others.
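A slow consumer can also limit how many messages the broker hands it at once. The sketch below assumes an amqplib channel and uses its `prefetch` call; the function name `startBoundedConsumer`, the `handle` callback, and the default of 10 are assumptions for illustration, not settings from the CSL implementation.

```javascript
// Sketch: cap the number of unacknowledged deliveries for a consumer.
// `channel` is assumed to be an amqplib channel; `handle` is a hypothetical handler.
async function startBoundedConsumer(channel, queue, handle, prefetchCount = 10) {
  // The broker stops pushing once prefetchCount messages are awaiting an ack.
  await channel.prefetch(prefetchCount);
  return channel.consume(queue, (msg) => {
    if (msg === null) return; // consumer was cancelled by the broker
    try {
      handle(msg);
      channel.ack(msg);
    } catch (err) {
      channel.nack(msg, false, false); // do not requeue; dead-letter if configured
    }
  });
}
```

With a low prefetch, the rest of the backlog stays in the queue on the broker rather than in the consumer's memory, which is one way a slow module can run at its own pace.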

Decentralized Consumption Is the Point

No service knows:

How many consumers exist

Who owns which reactions

What downstream logic looks like

This avoids:

Release coordination

Dependency graphs

“Who broke whom?” debates

Decentralization is not chaos—it’s delegated responsibility.

A Note on Acknowledgements and Safety

Consumers explicitly acknowledge messages:

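    channel.consume(queue, msg => {
      if (msg === null) return; // consumer was cancelled by the broker
      try {
        handle(msg);
        channel.ack(msg);
      } catch (e) {
        // allUpTo = false, requeue = false: reject only this message and
        // dead-letter it if the queue has a DLX configured (otherwise it is dropped)
        channel.nack(msg, false, false);
      }
    });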

This enables:

At-least-once delivery

Retries via requeue, or parking failed messages in a dead-letter queue (DLQ)

Controlled failure handling

RabbitMQ does not assume success. Neither should you.
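A message rejected without requeue is only kept if its queue has a dead-letter exchange configured. The sketch below shows one way to declare that topology with amqplib. The function name and the names `csl.events.dlx` and `<queue>.dlq` are hypothetical conventions chosen for illustration, not details of the CSL implementation.

```javascript
// Sketch: give a consumer queue a dead-letter route so rejected messages are kept.
// `channel` is assumed to be an amqplib channel. The names 'csl.events.dlx' and
// '<queue>.dlq' are hypothetical conventions.
async function declareQueueWithDeadLetter(channel, queueName, bindingPattern) {
  const dlx = 'csl.events.dlx';
  const dlq = `${queueName}.dlq`;

  // Dead-letter side: a direct exchange and a parking queue keyed by the source queue.
  await channel.assertExchange(dlx, 'direct', { durable: true });
  await channel.assertQueue(dlq, { durable: true });
  await channel.bindQueue(dlq, dlx, queueName);

  // Working queue: messages nacked with requeue=false are re-published to the DLX.
  await channel.assertQueue(queueName, {
    durable: true,
    deadLetterExchange: dlx,
    deadLetterRoutingKey: queueName,
  });
  await channel.bindQueue(queueName, 'csl.events', bindingPattern);
  return { dlx, dlq };
}
```

One caveat: RabbitMQ refuses to redeclare an existing queue with different arguments, so a queue that was first created without a dead-letter exchange has to be deleted and recreated, or given the setting through a broker policy instead.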

Why This Scales With Teams, Not Just Traffic

As organizations grow:

Teams want autonomy

Release cycles diverge

Responsibilities fragment

RabbitMQ supports this naturally:

Teams add consumers without permission

Teams remove consumers without coordination

Teams scale independently

The architecture scales organizationally, not just technically.

Common Pitfalls (And How CSL Avoids Them)

Overloading routing keys → keep them semantic, not procedural

Central “listener” services → let modules consume directly

Business logic in the broker → never

Synchronous dependencies → avoid at all costs

RabbitMQ is powerful—but only when kept simple.

Mental Model Recap

Think of RabbitMQ as:

A switchboard, not a controller

A router, not a decision-maker

A broadcaster, not a coordinator

When events enter RabbitMQ, ownership dissolves. Reactions belong to whoever chooses to listen.

Where We Go Next

Now that events are published, routed, and consumed across modules, the final step is to see the whole system in motion.

In the next article, End-to-End Data Flow Scenarios, we’ll trace real flows—from a user action in the CSL Web App, through Redis and RabbitMQ, to multiple reacting modules—so you can watch the Datahub work from start to finish.

Communication at scale isn’t about control.

It’s about letting the right conversations happen—everywhere, safely.