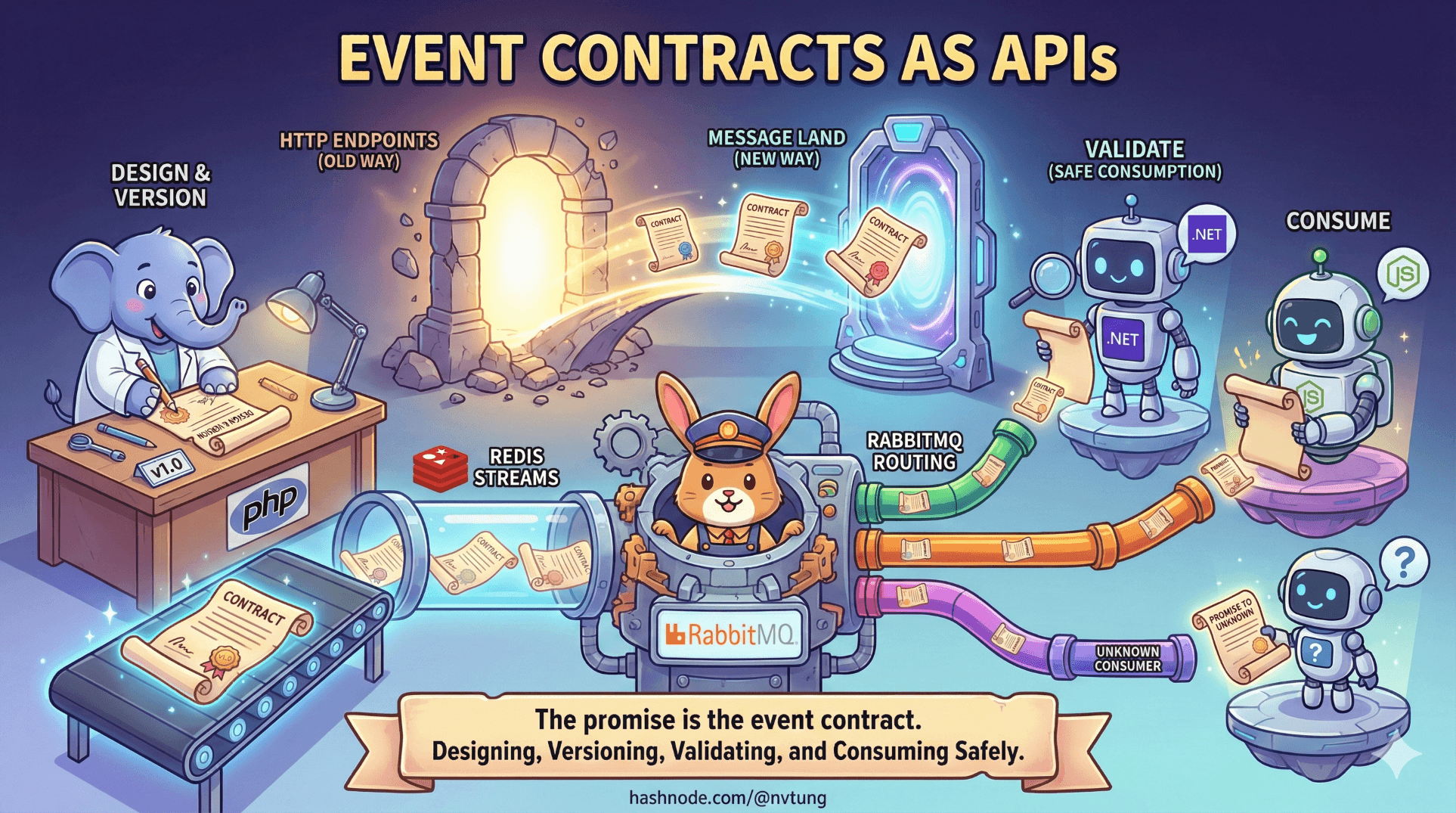

Event Contracts as APIs

Designing stable schemas for reliable event-driven systems

Series: Designing a Microservice-Friendly Datahub

In event-driven systems, APIs don’t disappear—they move. Instead of living behind HTTP endpoints, they live inside messages. Every event you publish becomes a promise to unknown consumers, running unknown versions of code, at unknown times.

That promise is the event contract.

This article treats event contracts as first-class APIs and shows, using practical examples with PHP, Redis Streams, .NET, RabbitMQ, and Node.js, how to design, version, validate, and consume them safely. This stack closely mirrors the Datahub I actually implemented.

What Is an Event Contract?

An event contract defines:

What happened (semantic meaning)

How it’s represented (schema)

What’s guaranteed (fields, types, invariants)

How it evolves (versioning & compatibility)

If REST APIs answer “What can you ask me to do?”, event contracts answer “What facts will I reliably tell you?”

Once an event leaves the producer, the contract becomes public.

A Minimal, Strong Event Envelope

Start with a boring, explicit envelope. Boring is good.

{
  "event_type": "user.updated",
  "event_version": 1,
  "event_id": "9b6c8c2a-7c9d-4b7f-9a1e-1e1f9a5b3f6a",
  "occurred_at": "2025-01-02T10:15:30Z",
  "producer": "web-app",
  "data": {
    "user_id": 123,
    "display_name": "Alice Nguyen"
  }
}

Why this works:

event_type + event_version → routing + compatibility

event_id → idempotency

occurred_at → temporal reasoning

producer → ownership

data → the only part consumers interpret
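As an illustration, the envelope could be assembled by one small helper so that every producer fills the same fields the same way. This is a minimal sketch, not the Datahub's actual code: the `createEnvelope` name and its argument order are assumptions made for this example.

```javascript
const { randomUUID } = require("node:crypto");

// Hypothetical helper: wraps a payload in the envelope fields described above.
function createEnvelope(eventType, eventVersion, producer, data) {
  if (!Number.isInteger(eventVersion) || eventVersion < 1) {
    throw new TypeError("event_version must be a positive integer");
  }
  return {
    event_type: eventType,
    event_version: eventVersion,
    event_id: randomUUID(),                // unique per event, used for idempotency
    occurred_at: new Date().toISOString(), // UTC timestamp ending in "Z"
    producer,                              // the owning service
    data,                                  // the only part consumers interpret
  };
}

const event = createEnvelope("user.updated", 1, "web-app", {
  user_id: 123,
  display_name: "Alice Nguyen",
});
console.log(JSON.stringify(event, null, 2));
```

Centralizing envelope creation keeps the metadata fields out of individual call sites, so a producer only decides the event type, version, and payload.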

Ownership: One Event, One Owner

Every event must have exactly one owner.

The owner:

Defines the schema

Controls versioning

Decides meaning

Consumers:

Interpret

React

Derive state

They do not negotiate schema changes. If multiple teams “co-own” an event, no one owns it—and evolution stalls.

Producing Events (PHP → Redis Streams)

Producers should emit events after state is committed. Keep emission simple.

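// Append the event to the 'events' stream; '*' lets Redis assign the entry ID
$redis->xAdd(
    'events',
    '*',
    [
        'event_type' => 'user.updated',
        'event_version' => 1,
        'event_id' => uuid_create(UUID_TYPE_RANDOM), // requires the PECL uuid extension
        'occurred_at' => gmdate('Y-m-d\TH:i:s\Z'),   // UTC, same format as the envelope example
        'producer' => 'web-app',
        'data' => json_encode([
            'user_id' => $userId,
            'display_name' => $displayName
        ])
    ]
);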

Notes:

No routing logic

No consumer awareness

No retries here

The producer’s job is to state facts, not orchestrate outcomes.

Versioning Rules (Non-Negotiable)

Rule 1: Never Change Meaning In Place

Changing semantics without a version bump is a lie.

Bad

Silently start putting the user’s full name in the “display_name” field.

"display_name": "Alice" // suddenly means "full_name"

Good

Bump the version first, then add a new field for the new meaning.

{
  "event_version": 2,
  "data": { "full_name": "Alice Nguyen" }
}

Rule 2: Prefer Additive Changes

Add fields; don’t remove or change existing ones.

{
  "event_version": 1,
  "data": {
    "user_id": 123,
    "display_name": "Alice",
    "avatar_url": null
  }
}

Old consumers keep working. New consumers opt in.
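On the consumer side, additive changes stay safe only if the reader picks the fields it knows and ignores the rest. The sketch below shows one way to do that; the `readUserUpdatedV1` name and the extra `locale` field are assumptions made for this example.

```javascript
// Hypothetical consumer-side reader for user.updated v1.
// It picks only the fields it understands and ignores everything else.
function readUserUpdatedV1(data) {
  if (!Number.isInteger(data.user_id) || typeof data.display_name !== "string") {
    throw new Error("user.updated v1: missing or invalid required fields");
  }
  return {
    userId: data.user_id,
    displayName: data.display_name,
    // Optional field added later: absent and null are treated the same.
    avatarUrl: data.avatar_url ?? null,
  };
}

// Payload from before the additive change.
const oldPayload = { user_id: 123, display_name: "Alice" };
// Payload after it, plus a field this consumer has never heard of.
const newPayload = { user_id: 123, display_name: "Alice", avatar_url: null, locale: "en" };

console.log(readUserUpdatedV1(oldPayload));
console.log(readUserUpdatedV1(newPayload));
```

Both calls produce the same internal object, which is what lets old and new producers coexist on version 1.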

Rule 3: Breaking Changes Require New Versions

Breaking changes demand:

New event_version

Parallel support during migration

Clear deprecation timelines
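Parallel support during a migration can be as simple as one handler per version, each normalizing to the same internal shape. This is a sketch under assumptions: the handler map and `normalizeUserUpdated` are hypothetical, and it assumes version 2 still carries `user_id` alongside `full_name`.

```javascript
// Hypothetical per-version handlers that normalize to one internal shape.
// Assumption: v2 keeps user_id and replaces display_name with full_name.
const userUpdatedHandlers = new Map([
  [1, (data) => ({ userId: data.user_id, name: data.display_name })],
  [2, (data) => ({ userId: data.user_id, name: data.full_name })],
]);

function normalizeUserUpdated(event) {
  const handler = userUpdatedHandlers.get(event.event_version);
  if (!handler) {
    // Fail loudly instead of guessing at an unknown contract.
    throw new Error(`Unsupported user.updated version: ${event.event_version}`);
  }
  return handler(event.data);
}

const v1 = { event_version: 1, data: { user_id: 123, display_name: "Alice" } };
const v2 = { event_version: 2, data: { user_id: 123, full_name: "Alice Nguyen" } };

console.log(normalizeUserUpdated(v1));
console.log(normalizeUserUpdated(v2));
```

Once the deprecation deadline for version 1 passes, removing its entry from the map is the whole cleanup.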

Validating at the Edge (Producer / Translator)

Fail fast before publishing.

.NET (schema gate before RabbitMQ publish)

if (!SchemaRegistry.IsValid("user.updated", 1, payload))
{
    throw new InvalidOperationException("Invalid event contract");
}

Validation at the edge prevents malformed truth from spreading.
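To make the idea of a schema gate concrete, here is a minimal sketch of what such a check might do internally. It is not a real schema registry: the `schemas` map, the `"type@version"` key format, and `validateEvent` are assumptions for illustration, and a production setup would more likely use a full schema language such as JSON Schema.

```javascript
// Hypothetical in-memory registry: "<event_type>@<version>" maps to the
// required data fields and their expected JavaScript types.
const schemas = new Map([
  ["user.updated@1", { user_id: "number", display_name: "string" }],
]);

// Returns a list of contract violations; an empty list means the event may be published.
function validateEvent(event) {
  const key = `${event.event_type}@${event.event_version}`;
  const schema = schemas.get(key);
  if (!schema) {
    return [`unknown contract ${key}`];
  }
  const data = event.data ?? {};
  const errors = [];
  for (const [field, type] of Object.entries(schema)) {
    if (typeof data[field] !== type) {
      errors.push(`data.${field} must be a ${type}`);
    }
  }
  return errors;
}

const good = { event_type: "user.updated", event_version: 1, data: { user_id: 123, display_name: "Alice" } };
const bad = { event_type: "user.updated", event_version: 1, data: { user_id: "123" } };

console.log(validateEvent(good));
console.log(validateEvent(bad));
```

Returning every violation at once, rather than throwing on the first, tends to make producer-side failures easier to diagnose.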

Publishing to RabbitMQ (Translator / Bridge)

Use routing keys that describe what happened, not who should react.

channel.ExchangeDeclare(
    exchange: "events",
    type: ExchangeType.Topic,
    durable: true
);

channel.BasicPublish(
    exchange: "events",
    routingKey: "user.updated",
    body: Serialize(payload)
);

Producers publish. Consumers opt in.
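Consumers opt in by binding a queue to the topic exchange with a pattern, where `*` matches exactly one dot-separated word and `#` matches zero or more words. The sketch below re-implements that matching rule purely for illustration; `topicMatches` is a hypothetical function and not part of any RabbitMQ client, since the broker does this matching itself.

```javascript
// Illustrative re-implementation of topic-exchange pattern matching.
// '*' matches exactly one word, '#' matches zero or more words.
function topicMatches(pattern, routingKey) {
  const p = pattern.split(".");
  const k = routingKey === "" ? [] : routingKey.split(".");

  function match(i, j) {
    if (i === p.length) return j === k.length; // pattern consumed: key must be too
    if (p[i] === "#") {
      // Try letting '#' absorb 0, 1, 2, ... of the remaining words.
      for (let n = j; n <= k.length; n++) {
        if (match(i + 1, n)) return true;
      }
      return false;
    }
    if (j === k.length) return false; // words exhausted, but the pattern needs one more
    if (p[i] === "*" || p[i] === k[j]) return match(i + 1, j + 1);
    return false;
  }

  return match(0, 0);
}

console.log(topicMatches("user.*", "user.updated"));         // true
console.log(topicMatches("user.*", "user.profile.updated")); // false
console.log(topicMatches("user.#", "user.profile.updated")); // true
console.log(topicMatches("order.*", "user.updated"));        // false
```

Because the routing key only states what happened, a new consumer can subscribe with a new binding and the producer never needs to change.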

Consuming Defensively (Node.js)

Consumers should:

Accept only versions they support

Ignore unknown fields

Fail loudly on incompatible versions

Be idempotent

// Reject contracts this consumer does not support
if (event.event_type !== "user.updated" || event.event_version !== 1) {
  throw new Error("Unsupported event contract");
}

// Idempotency: record the event_id; a duplicate delivery leaves the existing row unchanged
await db.query(
  `INSERT INTO processed_events (event_id)
   VALUES (?) ON DUPLICATE KEY UPDATE event_id = event_id`,
  [event.event_id]
);

Silent acceptance of incompatible versions is how data corruption sneaks in.

Idempotency Depends on the Contract

At-least-once delivery means duplicates happen. Contracts make retries safe.

INSERT INTO processed_events (event_id)
VALUES (:event_id)
ON DUPLICATE KEY UPDATE event_id = event_id;

No stable event_id → retries become dangerous.
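The same idea can be sketched without a database to show the control flow: look up the `event_id`, skip if it was already seen, otherwise apply the side effects and record it. This is a simplified, synchronous illustration. The in-memory `Set` stands in for the processed_events table, and `handleOnce` is a hypothetical name; a real consumer would rely on a unique key in a durable store, which also covers concurrent deliveries.

```javascript
// In-memory stand-in for the processed_events table (assumption: a real
// consumer would use a unique key in a durable store instead).
const processedEventIds = new Set();

// Hypothetical wrapper: runs the handler at most once per event_id.
function handleOnce(event, handler) {
  if (processedEventIds.has(event.event_id)) {
    return false; // duplicate delivery: skip side effects
  }
  handler(event);
  processedEventIds.add(event.event_id); // mark only after the handler succeeds
  return true;
}

let applied = 0;
const delivered = { event_id: "9b6c8c2a-7c9d-4b7f-9a1e-1e1f9a5b3f6a", event_type: "user.updated" };

handleOnce(delivered, () => { applied += 1; }); // first delivery: handler runs
handleOnce(delivered, () => { applied += 1; }); // redelivery: skipped
console.log(applied); // 1
```

If the handler throws, the ID is never recorded, so the event can be retried later.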

Contracts as Living Documentation

Treat schemas like code:

events/
  user.updated/
    v1.json
    v2.json
  order.completed/
    v1.json

Benefits:

Shared vocabulary

Onboarding material

Reviewable changes

Fewer meetings

Schemas replace tribal knowledge.
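With a predictable layout, tooling can locate any contract from its type and version alone. The helper below is a small sketch of that lookup; `schemaPath` and its naming checks are assumptions for this example, not part of any existing tool.

```javascript
// Hypothetical helper mapping a contract to its file in the layout above.
function schemaPath(eventType, eventVersion) {
  // Allow only dot-separated lowercase words, so the type cannot escape the events/ folder.
  if (!/^[a-z0-9_]+(\.[a-z0-9_]+)*$/.test(eventType)) {
    throw new Error(`Invalid event type: ${eventType}`);
  }
  if (!Number.isInteger(eventVersion) || eventVersion < 1) {
    throw new Error(`Invalid event version: ${eventVersion}`);
  }
  return `events/${eventType}/v${eventVersion}.json`;
}

console.log(schemaPath("user.updated", 2));    // events/user.updated/v2.json
console.log(schemaPath("order.completed", 1)); // events/order.completed/v1.json
```

The same function can serve validators, documentation generators, and review tooling, which keeps the repository layout itself part of the contract.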

Events vs REST APIs (Responsibility Check)

| Aspect | REST API | Event Contract |
| --- | --- | --- |
| Direction | Request/Response | Broadcast |
| Coupling | Caller knows callee | Producer ignores consumers |
| Failure | Immediate | Deferred |
| Versioning | Endpoint-based | Schema-based |
| Testing | Integration tests | Contract tests |

Different mechanics. Same responsibility.

Common Anti-Patterns (Avoid These)

“We’ll just change what this field means, no version bump needed”

“Consumers can figure it out”

“Reuse one event for multiple meanings”

“We’ll document later”

All end the same way: silent drift, then painful rewrites.

Closing Thought

In event-driven systems, your schema is your handshake.

Make it:

Explicit

Versioned

Owned

Boring

Events don’t just carry data.

They carry trust.

And trust, once broken, is far harder to replay than any message stream.