Designing for Decoupling and Evolution

How to build systems that evolve without breaking

Series: Designing a Microservice-Friendly Datahub

PART II — DESIGN PRACTICES: THE “HOW”

Previous: Datahub Technology Choices: Tools That Fit the Pattern

Next: Datahub: Reliability, Failure, and Observability

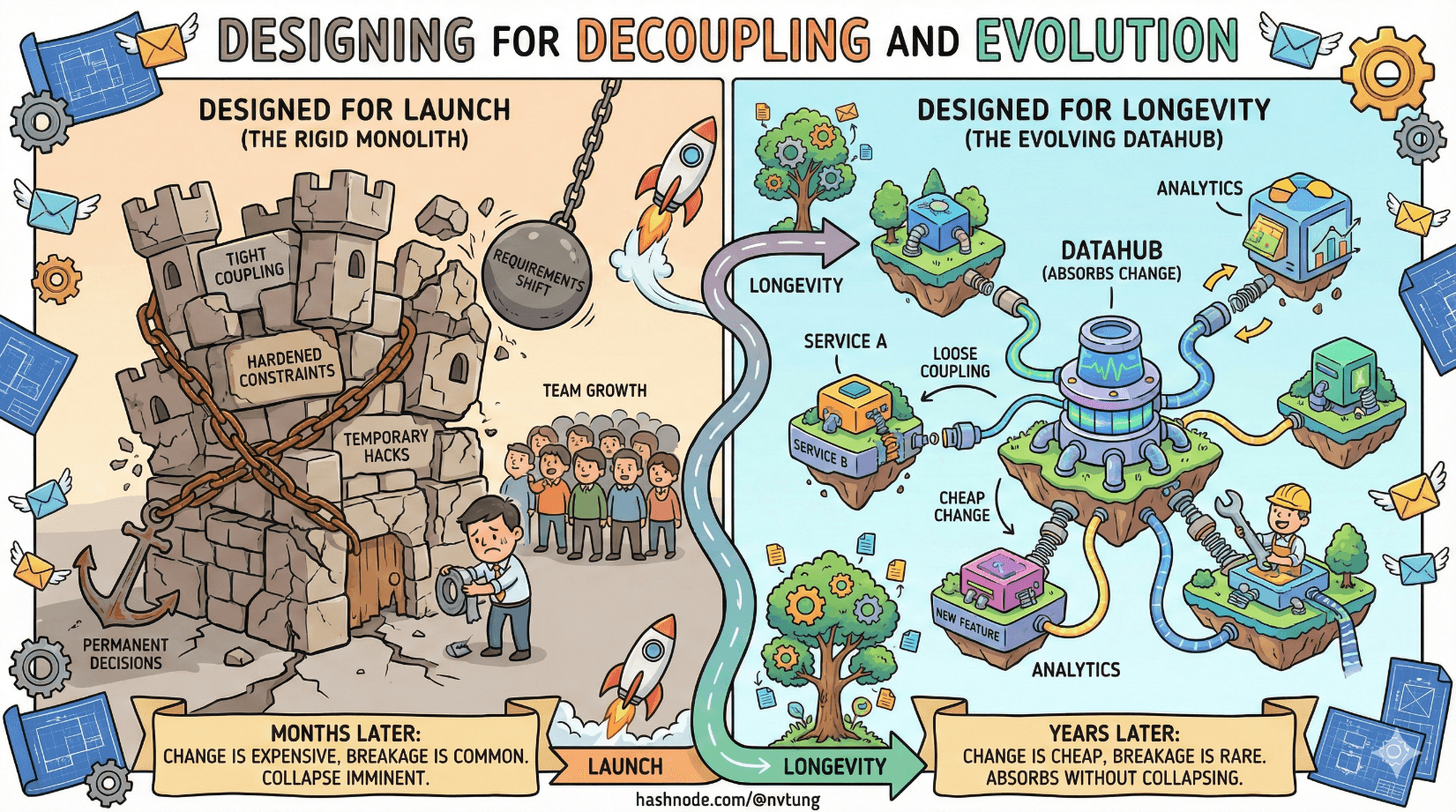

Most software architectures are designed for launch. Very few are designed for longevity.

The difference shows up months or years later, when requirements shift, teams grow, and yesterday’s “temporary” decisions harden into permanent constraints. Systems that survive this phase don’t do so because they predicted the future correctly—they survive because they made change cheap and breakage rare.

This article is about designing Datahub-based systems with that long view in mind. Not to prevent change—that’s impossible—but to absorb it without collapsing.

Start With the Only Certainty: Change

Features will change.

Data models will change.

Teams will change.

Interpretations will change.

What you can choose is where the cost of that change is paid.

Poorly designed systems push change costs outward:

onto consumers,

onto operations,

onto coordination meetings,

onto release freezes.

Well-designed systems localize change. They let evolution happen at the edges while the core remains stable.

That’s the heart of decoupling.

Avoiding Shared Databases: Ownership or Chaos

The fastest way to make change expensive is to let multiple services share the same database.

Shared databases:

Create invisible dependencies

Make schema changes political

Encourage shortcut integrations

Destroy ownership boundaries

They fail not because they’re technically unsound, but because they blur responsibility. When everyone depends on the same tables, no one can change them safely.

In a decoupled system:

Each service owns its data

Databases are private

Changes are announced as events

Consumers build their own derived views

This isn't accidental duplication; it's intentional redundancy. Redundancy is what allows services to evolve independently.
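A minimal in-process sketch in Python shows the shape of this ownership model. Everything here is hypothetical and illustrative: `InMemoryHub` stands in for a real Datahub broker, and `CustomerService`, `BillingService`, and the `CustomerRenamed` event are invented names. The owning service writes only to its private store and announces the change. The consumer keeps its own redundant view and never reads the owner's database.

```python
from typing import Callable


class InMemoryHub:
    """Hypothetical stand-in for the Datahub: delivers each event to every subscriber."""

    def __init__(self) -> None:
        self._subscribers: list[Callable[[dict], None]] = []

    def subscribe(self, handler: Callable[[dict], None]) -> None:
        self._subscribers.append(handler)

    def publish(self, event: dict) -> None:
        for handler in self._subscribers:
            handler(event)


class CustomerService:
    """Owns customer data. Its store is private; other services never query it."""

    def __init__(self, hub: InMemoryHub) -> None:
        self._db: dict[str, dict] = {}
        self._hub = hub

    def rename(self, customer_id: str, name: str) -> None:
        self._db[customer_id] = {"id": customer_id, "name": name}
        # Announce the change as an event instead of exposing the table.
        self._hub.publish({"type": "CustomerRenamed", "customer_id": customer_id, "name": name})


class BillingService:
    """Consumer that builds a derived view holding only the fields it needs."""

    def __init__(self, hub: InMemoryHub) -> None:
        self.customer_names: dict[str, str] = {}  # intentional, redundant copy
        hub.subscribe(self._on_event)

    def _on_event(self, event: dict) -> None:
        if event.get("type") == "CustomerRenamed":
            self.customer_names[event["customer_id"]] = event["name"]


hub = InMemoryHub()
customers = CustomerService(hub)
billing = BillingService(hub)
customers.rename("c-1", "Ada")
print(billing.customer_names)  # {'c-1': 'Ada'}
```

If the customer team later restructures its tables, billing is unaffected as long as the `CustomerRenamed` event keeps its shape.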

Contract-Based Communication: Replace Assumptions With Agreements

Decoupling does not mean “anything goes.” It means explicit agreements instead of implicit assumptions.

In Datahub architectures, those agreements are contracts:

Event schemas

Message formats

API payloads

Semantic meaning

A contract answers three questions:

What data is being shared?

What does it mean?

What guarantees does the producer make?

Everything else is an implementation detail.
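One way to make those three answers explicit is to write the contract down as data that both sides can check against. The Python sketch below is illustrative only: `FieldSpec`, `EventContract`, the `OrderPlaced` fields, and the listed guarantees are hypothetical examples, not a prescribed format. In practice the same information often lives in a schema language plus accompanying documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    type_: type
    meaning: str            # "What does it mean?"
    required: bool = True


@dataclass(frozen=True)
class EventContract:
    name: str
    version: int
    fields: dict[str, FieldSpec]   # "What data is being shared?"
    guarantees: tuple[str, ...]    # "What guarantees does the producer make?"

    def violations(self, payload: dict) -> list[str]:
        """Return contract violations for a payload; an empty list means it conforms."""
        problems = []
        for name, spec in self.fields.items():
            if name not in payload:
                if spec.required:
                    problems.append(f"missing required field '{name}'")
            elif not isinstance(payload[name], spec.type_):
                problems.append(f"field '{name}' should be {spec.type_.__name__}")
        return problems


# Hypothetical contract; field meanings and guarantees are illustrative only.
ORDER_PLACED_V1 = EventContract(
    name="OrderPlaced",
    version=1,
    fields={
        "order_id": FieldSpec(str, "Unique order ID assigned by the Orders service"),
        "total_cents": FieldSpec(int, "Order total in minor currency units"),
        "currency": FieldSpec(str, "ISO 4217 currency code"),
    },
    guarantees=(
        "At-least-once delivery: consumers deduplicate on order_id",
        "Events for the same order_id are published in order",
    ),
)

print(ORDER_PLACED_V1.violations({"order_id": "o-1", "currency": "EUR"}))
# ["missing required field 'total_cents'"]
```

The code checks only the first question mechanically; the `meaning` strings and `guarantees` exist so that the other two are written down rather than assumed.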

Loose coupling without contracts is chaos.

Tight coupling with contracts is still coupling.

Loose coupling with strong contracts is architecture.

Schemas Are APIs (Whether You Admit It or Not)

Teams often treat schemas as internal details and APIs as public interfaces. In event-driven systems, that distinction collapses.

An event schema is an API.

Once an event is published:

Someone will depend on it

Someone will parse it

Someone will store it

Someone will build logic around it

Changing a schema casually is equivalent to changing a public API without versioning. The damage just happens asynchronously.

If an event leaves your service boundary, it must be treated as a public contract.

Event Versioning: Designing for the Second Change

Most schemas aren’t broken by their first version. They’re broken by the second.

Event versioning exists because:

Fields get added

Meanings get refined

Edge cases emerge

Original assumptions fail

Versioning can be expressed through:

A version field

A versioned topic or routing key

A new event type entirely

What matters is not the mechanism, but the discipline:

Never silently change meaning

Never break existing consumers

Never assume everyone upgrades at once
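As a sketch of the version-field mechanism, a consumer can "upcast" older events to the latest shape before handling them, so it needs only one code path while old and new events coexist. The Python below assumes a hypothetical `OrderPlaced` change in which v2 groups `total_cents` and `currency` into a nested `total` object. The `topic_for` naming scheme is likewise an illustrative assumption, not a broker convention.

```python
from typing import Callable

LATEST_VERSION = 2


def _order_placed_v1_to_v2(event: dict) -> dict:
    """Hypothetical change: v2 nests amount and currency under "total"."""
    upgraded = {k: v for k, v in event.items() if k not in ("total_cents", "currency")}
    upgraded["total"] = {"amount_cents": event["total_cents"], "currency": event["currency"]}
    upgraded["version"] = 2
    return upgraded


# Each upcaster lifts an event exactly one version forward.
UPCASTERS: dict[int, Callable[[dict], dict]] = {1: _order_placed_v1_to_v2}


def upcast(event: dict) -> dict:
    """Bring any known version of an event up to LATEST_VERSION without mutating it."""
    version = event.get("version", 1)  # assumption: events without a version field are v1
    if version > LATEST_VERSION:
        # A newer producer is ahead of this consumer; its meaning is unknown here.
        raise ValueError(f"unsupported future version {version}")
    while version < LATEST_VERSION:
        event = UPCASTERS[version](event)
        version = event["version"]
    return event


def topic_for(event_type: str, version: int) -> str:
    """Alternative mechanism (hypothetical naming): put the version in the topic."""
    return f"{event_type}.v{version}"


v1 = {"type": "OrderPlaced", "version": 1, "order_id": "o-1", "total_cents": 1999, "currency": "EUR"}
print(upcast(v1))
# {'type': 'OrderPlaced', 'version': 2, 'order_id': 'o-1', 'total': {'amount_cents': 1999, 'currency': 'EUR'}}
print(topic_for("order-placed", 2))  # order-placed.v2
```

The restructuring gets a new version number instead of quietly redefining `total_cents`, which is the "never silently change meaning" rule in code form.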

Backward Compatibility: The Unsung Hero

Backward compatibility is how decoupling survives reality.

In distributed systems:

Consumers deploy more slowly than producers

Some consumers are offline

Some consumers lag by weeks or months

If producers require consumers to update immediately, you’ve recreated tight coupling—just over a message broker.

Designing for backward compatibility means:

Add fields, don’t remove them

Make new data optional

Preserve old semantics

Let consumers opt in to new behavior

This isn’t generosity. It’s survival.
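These rules are mechanical enough to check automatically before a producer ships a schema change. Schema registries commonly offer compatibility checks of this kind; the Python sketch below hand-rolls a deliberately simplified version over a hypothetical schema format (field name mapped to a type name and a required flag). It is not a complete compatibility model: it ignores renames, nested types, and semantic changes, which still need human review.

```python
# Hypothetical schema format: field name -> (type name, required?)
Schema = dict[str, tuple[str, bool]]


def breaking_changes(old: Schema, new: Schema) -> list[str]:
    """Report changes in `new` that would break consumers or readers of `old` events."""
    problems = []
    for name, (old_type, _) in old.items():
        if name not in new:
            # Consumers still reading this field would break.
            problems.append(f"removed field '{name}'")
        elif new[name][0] != old_type:
            problems.append(f"changed type of '{name}' from {old_type} to {new[name][0]}")
    for name, (_, new_required) in new.items():
        if name not in old and new_required:
            # Events already in the log, or from not-yet-upgraded producers, lack it.
            problems.append(f"new field '{name}' must be optional")
        elif name in old and new_required and not old[name][1]:
            problems.append(f"field '{name}' changed from optional to required")
    return problems


v1 = {"order_id": ("string", True), "total_cents": ("int", True)}
v2_ok = {**v1, "coupon": ("string", False)}                       # additive and optional
v2_bad = {"order_id": ("int", True), "coupon": ("string", True)}  # three violations
print(breaking_changes(v1, v2_ok))   # []
print(breaking_changes(v1, v2_bad))
```

Run as a gate in the producer's build, a check like this makes "add fields, don't remove them" something the pipeline enforces rather than something reviewers have to remember.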

Consumers Move Slower Than Producers

This is one of the most painful lessons teams learn.

Producers are usually:

Actively developed

Owned by motivated teams

Close to the change

Consumers are often:

Numerous

Distributed

Owned by other teams

Less visible

A producer-centric mindset breaks systems. A consumer-aware mindset scales them.

Design like your slowest consumer matters—because it does.
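One way to make the slowest consumer visible is to keep a registry of which event versions each consumer can read and derive from it what the producer must keep publishing. The sketch below is hypothetical: `ConsumerRegistration`, `versions_to_publish`, and `can_retire` are invented names, and publishing several versions side by side is only one migration strategy among several.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConsumerRegistration:
    """Hypothetical registry entry: which event versions a consumer can read."""
    name: str
    owner_team: str                 # who to talk to before retiring a version
    supported_versions: frozenset[int]


def versions_to_publish(consumers: list[ConsumerRegistration], latest: int) -> list[int]:
    """Versions the producer must keep emitting so every consumer can read something.

    Each consumer gets the newest version it supports (up to `latest`); the latest
    version is always published so other consumers can opt in when they are ready.
    """
    needed = {latest}
    for consumer in consumers:
        readable = [v for v in consumer.supported_versions if v <= latest]
        if not readable:
            raise ValueError(f"{consumer.name} ({consumer.owner_team}) cannot read any published version")
        needed.add(max(readable))
    return sorted(needed)


def can_retire(version: int, consumers: list[ConsumerRegistration], latest: int) -> bool:
    """A version can be retired only when no registered consumer still needs it."""
    return version not in versions_to_publish(consumers, latest)


consumers = [
    ConsumerRegistration("billing", "payments", frozenset({1, 2})),
    ConsumerRegistration("reporting", "analytics", frozenset({1})),  # the slow one
]
print(versions_to_publish(consumers, latest=2))  # [1, 2]
print(can_retire(1, consumers, latest=2))        # False
```

Here the reporting consumer, not the producer's roadmap, decides when v1 can go away.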

Change Is Guaranteed; Breakage Is Optional

Breakage is a design choice.

It happens when:

Contracts are vague

Schemas mutate silently

Ownership is unclear

Upgrades are forced

Decoupled systems accept that:

Change will happen

Not everyone will change together

Time is part of correctness

They optimize for graceful evolution, not immediate alignment.
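On the consumer side, graceful evolution usually means reading events tolerantly, a pattern often called the Tolerant Reader: take the fields you need, ignore the rest, and default what may be missing. In this hypothetical Python sketch, `OrderView` and the `coupon` and `gift_wrap` fields are illustrative. The same function reads an event published months ago and one from a newer producer.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class OrderView:
    """The slice of a (hypothetical) OrderPlaced event this consumer actually uses."""
    order_id: str
    total_cents: int
    coupon: Optional[str] = None  # added to the event later; optional, so older events still parse


def read_order(event: dict) -> OrderView:
    """Extract only known fields; unknown fields are ignored rather than rejected."""
    return OrderView(
        order_id=event["order_id"],
        total_cents=event["total_cents"],
        coupon=event.get("coupon"),  # missing in older events -> None
    )


old_event = {"order_id": "o-1", "total_cents": 500}
new_event = {"order_id": "o-2", "total_cents": 700, "coupon": "SPRING", "gift_wrap": True}
print(read_order(old_event))  # OrderView(order_id='o-1', total_cents=500, coupon=None)
print(read_order(new_event))  # OrderView(order_id='o-2', total_cents=700, coupon='SPRING')
```

Required fields such as `order_id` still fail fast with a `KeyError`. Tolerance applies to additions, not to the removal of data the consumer depends on.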

Long-Term Thinking: Designing for Years, Not Releases

Short-term design asks:

- “How do we ship this feature?”

Long-term design asks:

- “How will this still work after 20 changes?”

Datahub architectures reward the second mindset. They:

Encourage clear ownership

Penalize shortcuts

Expose hidden coupling early

They are not the fastest way to build something once.

They are the safest way to build something that lasts.

What This Enables

When decoupling and evolution are designed in from the start:

Teams move independently

Failures stay local

Rollbacks are possible

History remains interpretable

Most importantly, systems become boring in the best possible way. Change stops being dramatic. It becomes routine.

Where We Go Next

At this point in the series, the architecture is sound—but systems don’t fail only in design. They fail in operation.

In the next article, we’ll shift focus to reliability, failure, and observability—what happens when messages pile up, consumers lag, and reality pushes back.

Good architecture doesn’t prevent change.

It survives it.