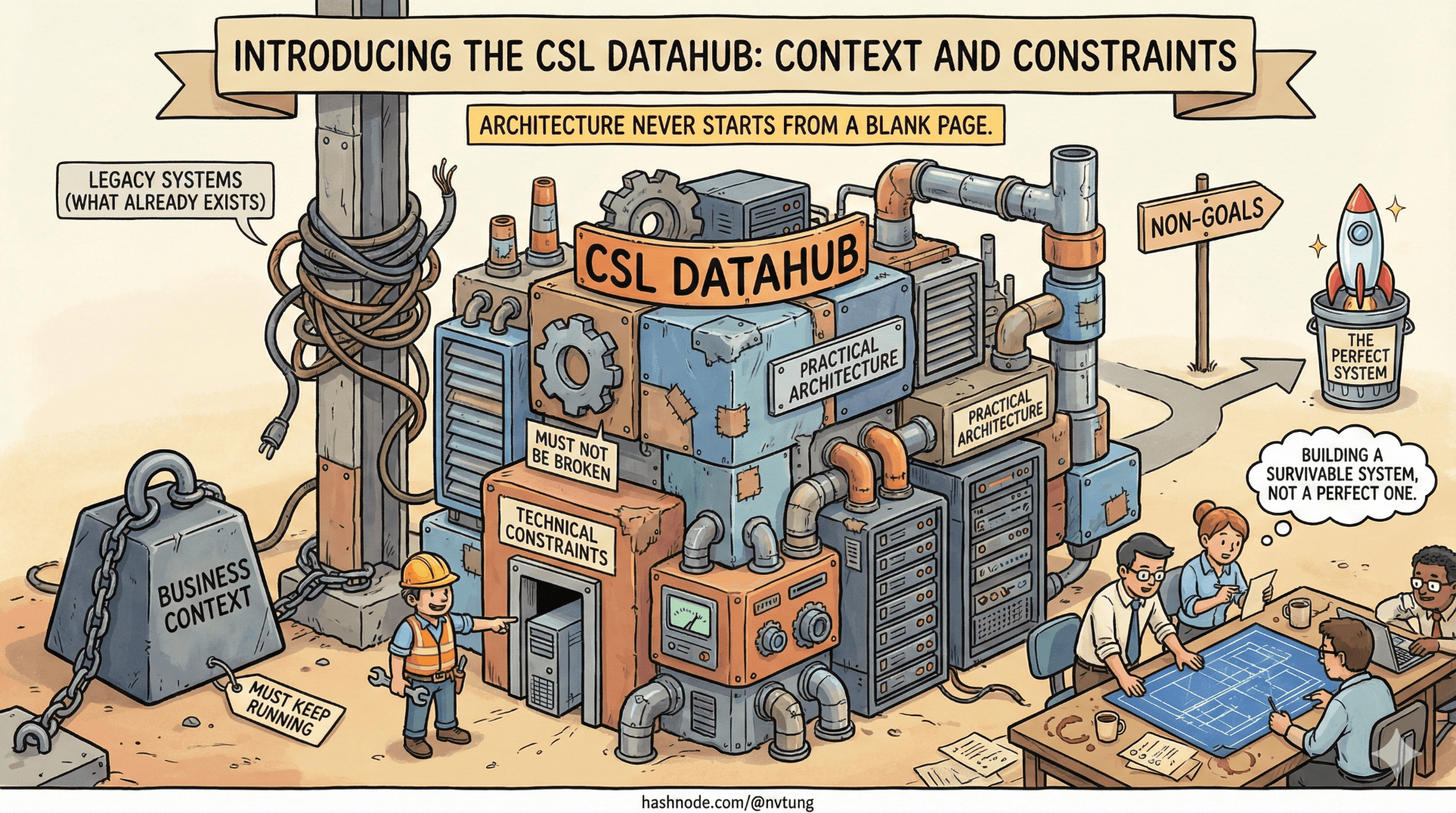

Introducing my CSL Datahub implementation: Context and Constraints

How real-world constraints shaped a practical Datahub architecture

Series: Designing a Microservice-Friendly Datahub

PART III — CASE STUDY: MY CSL DATAHUB IMPLEMENTATION

Previous: Scaling Patterns and Anti-Patterns

Next: High-Level Architecture Overview of my CSL Datahub implementation

Architecture never starts from a blank page. It starts from what already exists, what must keep running, and what cannot be broken. This article introduces CSL, my own Datahub implementation, by grounding it in reality: the business context, the technical constraints, and the deliberate non-goals that shaped every architectural decision.

This is not a story about building the perfect system. It’s about building a survivable one.

Disclaimer (Context & NDA):

The CSL Datahub implementation described in this case study was designed and built in 2021. While the architectural principles remain relevant, some technology choices could be updated today. To comply with NDA requirements, domain-specific business logic, sensitive data models, and proprietary workflows are intentionally generalized.

Business Context: One System, Many Responsibilities

The CSL system began life as a traditional web application—centralized, feature-rich, and business-critical. Over time, it accumulated responsibilities that extended far beyond its original scope:

User-facing workflows

Background processing

Integration with multiple internal modules

Data synchronization across systems

Scheduled jobs and batch operations

As the organization grew, so did the number of modules depending on CSL data. Different teams needed the same information for different purposes, at different speeds, and under different failure tolerances.

What was once a single application became a shared backbone—without the architecture to support that role.

Why Microservices Were Needed (Eventually)

This wasn’t a microservices-first decision. It was a pressure-driven one.

Symptoms appeared gradually:

New features required touching unrelated parts of the codebase

Integrations multiplied point-to-point

Deployment risk increased

Changes in one module broke others unexpectedly

The real problem wasn’t scale in traffic—it was scale in coordination.

Different teams needed:

Independent release cycles

Clear ownership boundaries

The ability to react to data changes without direct dependencies

Microservices were not introduced to replace the CSL app, but to orbit it—extracting responsibilities while allowing the core system to remain stable.

The Biggest Constraint: A Legacy PHP Application

At the center of everything sat a legacy PHP web application built on HumHub, which itself runs on the Yii framework. It was:

Business-critical

Actively used

Not easily replaceable

Not designed for modern message brokers

Rewriting it was not an option. Pausing development was not an option. Forcing it to behave like a microservice was also not realistic.

So the architecture had to work with the system as it was, not as we wished it to be.

Key implications:

Direct RabbitMQ integration inside the PHP app was avoided

The app remained the source of truth

Communication outward had to be low-risk and minimal

Multiple Modules, Multiple Languages

Around the CSL app grew a set of independent modules:

Some built in .NET

Some built in JavaScript/Node.js

Some designed for batch processing

Some designed for real-time reactions

A single-language stack was impossible.

This ruled out:

Shared codebases

Shared ORM layers

Shared databases

Instead, the system needed language-neutral contracts and communication patterns that worked everywhere.

Events and HTTP fit that requirement naturally.
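To make "language-neutral contract" concrete, here is a minimal sketch of a JSON event envelope with validation on both the producer and the consumer side. It is written in Python only for brevity. The field names follow the `user.updated` example used later in this article; the validation rules and the function names `encode_event` and `decode_event` are assumptions for illustration, not the actual CSL contract.

```python
import json

# Hypothetical contract: field names mirror the article's `user.updated`
# example; the type rules are assumptions made for this sketch.
REQUIRED_FIELDS = {"type": str, "user_id": int, "updated_at": int}


def encode_event(event: dict) -> bytes:
    """Validate an event against the contract and serialize it as UTF-8 JSON."""
    for name, expected in REQUIRED_FIELDS.items():
        if name not in event:
            raise ValueError(f"missing field: {name}")
        if not isinstance(event[name], expected):
            raise ValueError(f"field {name} must be {expected.__name__}")
    # sort_keys and compact separators give every producer the same bytes.
    return json.dumps(event, sort_keys=True, separators=(",", ":")).encode("utf-8")


def decode_event(payload: bytes) -> dict:
    """Parse a payload produced by any module and re-check the contract."""
    event = json.loads(payload.decode("utf-8"))
    encode_event(event)  # raises ValueError if the producer broke the contract
    return event
```

Because the contract is plain JSON with a few required fields, a .NET or Node.js module can implement the same two functions without sharing any code with the producer.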

Introducing the Processor: A Bridge, Not a Brain

One of the most important design decisions was introducing a dedicated Processor service, implemented in .NET.

Its role was explicit and limited:

Consume events

Call CSL APIs when needed

Publish messages to other modules

Translate between Redis, RabbitMQ, and REST

It was not allowed to:

Become a business decision engine

Replace domain logic

Own authoritative state

This kept complexity away from the legacy app without centralizing control.
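One way to picture "a bridge, not a brain" is a translation step that reshapes an event without interpreting it. The sketch below is an illustration in Python, not the actual .NET Processor code; `OutboundMessage`, `translate`, and the `source_id` field are hypothetical names introduced here.

```python
import json
from typing import NamedTuple


class OutboundMessage(NamedTuple):
    routing_key: str
    body: bytes


def translate(entry_id: str, fields: dict) -> OutboundMessage:
    """Turn one stream entry into a broker message without interpreting it."""
    payload = dict(fields)
    # Carry the stream entry ID along so consumers can trace or deduplicate.
    payload["source_id"] = entry_id
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    # The event type is reused as the routing key; no business rule is applied.
    return OutboundMessage(routing_key=fields["type"], body=body)
```

The function contains no conditionals on business data. Any rule such as "only forward updates for active users" would belong to the CSL app or to the consuming module, not here.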

Why Redis Streams Appeared in the Design

The CSL app needed a way to announce changes without:

Blocking user requests

Introducing new failure modes

Depending directly on external systems

Redis was already part of the infrastructure. Redis Streams provided:

Fast, append-only event buffering

Consumer groups

Minimal operational overhead

Inside the PHP app, publishing an event looked like this:

    // Append one event to the stream; '*' lets Redis generate the entry ID.
    $redis->xAdd(
        'csl:events',
        '*',
        [
            'type' => 'user.updated',
            'user_id' => $userId,
            'updated_at' => time(),
        ]
    );

This was intentionally simple:

No routing logic

No consumer awareness

No delivery guarantees beyond “don’t lose it immediately”

Redis absorbed bursts. The Processor handled the rest.
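The consumer-group behaviour this design relies on can be sketched with a small in-memory model. This is a simplified stand-in written in Python, not Redis itself: it models one stream with a single consumer group, generates simplified entry IDs, and the class and method names are hypothetical. It shows only the three ideas that matter here: entries are appended, each entry is delivered to the group once, and an entry stays pending until it is acknowledged.

```python
class Stream:
    """In-memory stand-in for one Redis stream with one consumer group."""

    def __init__(self):
        self.entries = []        # append-only list of (entry_id, fields)
        self.next_index = 0      # position of the first entry never delivered
        self.pending = {}        # entry_id -> consumer, delivered but not acked

    def add(self, fields):
        """Roughly XADD with '*': append and return a generated, increasing ID."""
        entry_id = f"{len(self.entries) + 1}-0"  # simplified ID format
        self.entries.append((entry_id, dict(fields)))
        return entry_id

    def read_group(self, consumer, count=10):
        """Roughly XREADGROUP with '>': return entries never delivered before."""
        batch = self.entries[self.next_index:self.next_index + count]
        self.next_index += len(batch)
        for entry_id, _ in batch:
            self.pending[entry_id] = consumer
        return batch

    def ack(self, entry_id):
        """Roughly XACK: returns 1 if the entry was pending, otherwise 0."""
        return 1 if self.pending.pop(entry_id, None) is not None else 0
```

If a consumer reads two entries and acknowledges only the first, the second remains in `pending`. That pending list is what lets a restarted consumer find work it had received but not finished.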

Consuming and Translating Events in the Processor

On the .NET side, the Processor consumed Redis Streams and forwarded meaningful events into RabbitMQ:

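    // Assumes StackExchange.Redis, where `redis` is an IDatabase
    // and the stream key is the first argument.
    while (true)
    {
        var entries = redis.StreamReadGroup(
            "csl:events",   // stream key
            "csl-group",    // consumer group
            "processor-1",  // consumer name
            ">");           // only entries never delivered to this group

        if (entries.Length == 0)
        {
            // StreamReadGroup does not block, so back off briefly when idle.
            System.Threading.Thread.Sleep(200);
            continue;
        }

        foreach (var entry in entries)
        {
            PublishToRabbitMQ(entry);
            // Acknowledge only after publishing, so a crash leaves the entry pending.
            redis.StreamAcknowledge("csl:events", "csl-group", entry.Id);
        }
    }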

This separation achieved several things:

The PHP app stayed simple

Failures were isolated in the Processor

Retry and dead-letter queue (DLQ) strategies lived outside the legacy system
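A retry-then-dead-letter policy of the kind mentioned above might look like the following sketch. It is an assumption about the general shape, not the Processor's actual policy: the attempt limit, the backoff formula, and the `forward`, `publish`, and `dead_letter` names are all hypothetical, and Python is used only for brevity.

```python
import time


def forward(entry, publish, dead_letter, max_attempts=3, base_delay=0.1, sleep=time.sleep):
    """Publish an entry with bounded retries, then park it if all attempts fail.

    Returns "published" or "dead-lettered". In both cases the entry is safe to
    acknowledge on the stream. If dead_letter itself raises, the exception
    propagates and the entry should stay pending.
    """
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            publish(entry)
            return "published"
        except Exception as exc:
            last_error = exc
            if attempt < max_attempts:
                # Exponential backoff: base_delay, 2 * base_delay, 4 * base_delay, ...
                sleep(base_delay * 2 ** (attempt - 1))
    dead_letter(entry, last_error)
    return "dead-lettered"
```

Keeping this logic in the Processor means the PHP app never has to know whether a downstream broker was slow, unavailable, or rejecting a message.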

RabbitMQ as the Inter-Module Backbone

RabbitMQ was chosen not for hype, but for fit:

Clear routing semantics

Strong delivery guarantees (publisher confirms, consumer acknowledgements, durable queues)

Mature tooling

Good multi-language support

Publishing from the Processor looked like:

    channel.BasicPublish(
        exchange: "csl.events",
        routingKey: "user.updated",
        body: messageBody
    );

Other modules subscribed independently, without knowing—or caring—about CSL internals.
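To illustrate what "clear routing semantics" buys, here is a sketch of how a topic-style binding decides which queues receive a message. It assumes `csl.events` is a topic exchange, which this article does not state, and the queue names in the usage note are hypothetical. The matching rules are the standard AMQP topic rules: `*` matches exactly one dot-separated word and `#` matches zero or more. Python is used only for brevity.

```python
def topic_matches(pattern: str, routing_key: str) -> bool:
    """Return True if an AMQP-style topic pattern matches a routing key."""

    def match(p, k):
        if not p:
            return not k              # pattern exhausted: key must be too
        head, rest = p[0], p[1:]
        if head == "#":
            # '#' may absorb zero or more words of the key.
            return any(match(rest, k[i:]) for i in range(len(k) + 1))
        if not k:
            return False              # pattern expects a word, key has none
        if head == "*" or head == k[0]:
            return match(rest, k[1:])
        return False

    return match(pattern.split("."), routing_key.split("."))


def route(bindings, routing_key):
    """Return the queues whose binding pattern matches the routing key."""
    return [queue for queue, pattern in bindings if topic_matches(pattern, routing_key)]
```

With bindings such as `("search-indexer", "user.*")` and `("audit-log", "#")`, a message published with `user.updated` reaches both queues, and neither subscriber needs to know the other exists.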

Explicit Non-Goals (Equally Important)

Several things were deliberately not attempted:

No full event sourcing

No rewrite of the CSL app

No synchronous orchestration across modules

No “single system to rule them all”

These non-goals protected the project from ambition overload.

Architecture succeeds as much by what it refuses to do as by what it attempts.

Architecture Is Shaped by Constraints

Every part of this design traces back to a constraint:

Legacy code → minimal intrusion

Multiple teams → loose coupling

Language diversity → message-based integration

Business continuity → incremental evolution

This is why the CSL Datahub looks the way it does. Not because it’s theoretically optimal—but because it was practically survivable.

Perfect architectures don’t exist. Context does.

Where We Go Next

Now that the context and constraints are clear, we can finally look at the system itself—without abstraction.

In the next article, High-Level Architecture Overview, we’ll walk through the complete CSL Datahub design, component by component, and trace how data actually flows through the system from end to end.

Understanding the why makes the what finally make sense.