Redis Streams vs Kafka: Choosing the Right Event Backbone

Comparing buffering and logging backbones in event-driven systems

Series: Designing a Microservice-Friendly Datahub

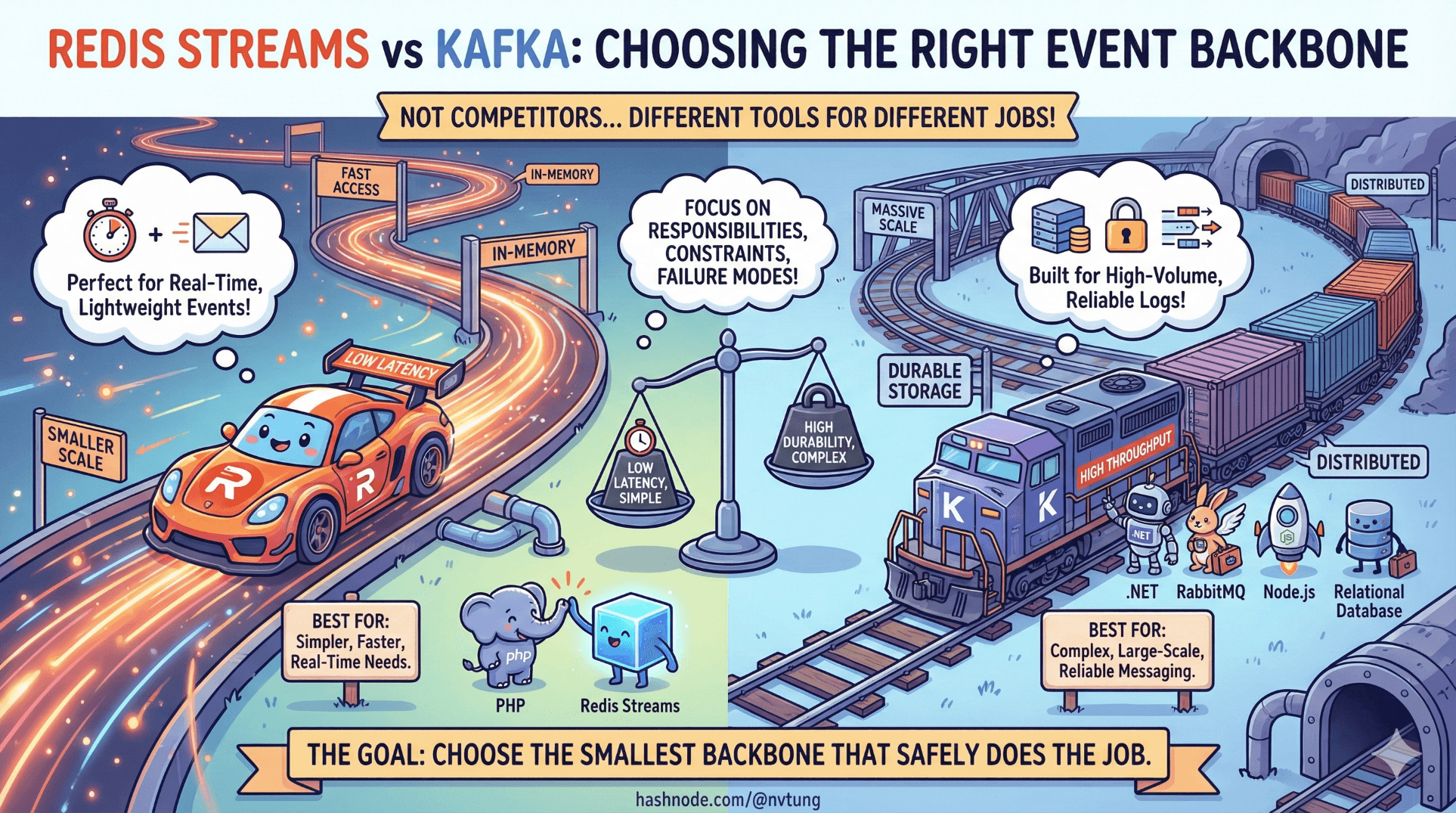

Choosing an event backbone is one of the most consequential decisions in an event-driven system—and also one of the most commonly misunderstood. Too often, the discussion starts with brand names instead of responsibilities, constraints, and failure modes.

Redis Streams and Kafka are not competitors in the abstract. They solve different problems, at different scales, with very different trade-offs. This article compares them using concrete examples and the same toolchain used throughout this series: PHP, Redis Streams, .NET, RabbitMQ, Node.js, and relational databases.

The goal is not to crown a winner.

The goal is to choose the smallest backbone that safely does the job.

Start With the Real Question (Not the Tool)

Before naming Redis or Kafka, ask this:

Do I need event propagation, or do I need event history?

That single question determines almost everything that follows.

Mental Models: Buffer vs Log

Redis Streams: A Time Buffer

Redis Streams behave like an ordered buffer that is as durable as your Redis persistence settings (AOF or RDB snapshots) make it.

They are designed to:

Absorb bursts

Decouple producers and consumers

Allow consumer lag

Enable safe retries

They are not designed to be a long-term source of truth.

Think of Redis Streams as:

“Hold this until someone processes it.”

Kafka: A Distributed Event Log

Kafka behaves like a durable, replayable log.

It is designed to:

Retain events for days, weeks, or months

Allow consumers to replay from any offset still within retention

Treat events as data

Support stream processing

Think of Kafka as:

“This is the data.”
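To make the two mental models concrete, here is a minimal in-memory sketch in Python. It does not talk to Redis or Kafka: `BoundedBuffer` and `AppendOnlyLog` are hypothetical names invented for illustration, and the fixed cap stands in for stream trimming under the assumption that the stream is length-limited.

```python
from collections import deque


class BoundedBuffer:
    """Toy stand-in for a capped stream: the oldest entries are trimmed away."""

    def __init__(self, max_len: int) -> None:
        # A deque with maxlen silently drops the oldest item once it is full.
        self.entries = deque(maxlen=max_len)

    def append(self, event: str) -> None:
        self.entries.append(event)


class AppendOnlyLog:
    """Toy stand-in for a retained log: every event keeps its offset."""

    def __init__(self) -> None:
        self.entries = []

    def append(self, event: str) -> int:
        self.entries.append(event)
        return len(self.entries) - 1  # offset of the event just written

    def read_from(self, offset: int) -> list:
        return self.entries[offset:]


buffer = BoundedBuffer(max_len=3)
log = AppendOnlyLog()
for n in range(5):
    buffer.append(f"event-{n}")
    log.append(f"event-{n}")

recent = list(buffer.entries)  # only the newest three entries survive
history = log.read_from(0)     # the full history is still readable
```

The buffer answers "what still needs processing?", while the log answers "what has ever happened?". That is the same split as the propagation versus history question above.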

Architecture Implications (This Is the Real Cost)

Redis Streams Architecture

Producer → Redis Streams → Consumer Group → Processing → Ack

Simple

Few moving parts

Low operational overhead

Clear backpressure signals

Kafka Architecture

Producer → Kafka Broker Cluster → Topic → Partition → Consumer Group → Offset Commit

High throughput

Strong ordering per partition

Operationally heavy

Requires careful tuning and expertise

Kafka earns its complexity only when you use its strengths.

Producing Events: PHP Example

Redis Streams (PHP)

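    // Assumes the phpredis extension ($redis is a connected Redis instance)
    // and the PECL uuid extension for uuid_create().
    $redis->xAdd(
        'events',  // stream key
        '*',       // let Redis assign the entry ID
        [
            'event_type'  => 'user.updated',
            'event_id'    => uuid_create(UUID_TYPE_RANDOM),
            'occurred_at' => gmdate('c'),  // ISO 8601 timestamp in UTC
            'data'        => json_encode([
                'user_id'      => 123,
                'display_name' => 'Alice',
            ]),
        ]
    );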

One call

No schema registry

No partitions

No brokers to manage

This matters in legacy or core systems.
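The envelope in the PHP example (type, id, timestamp, JSON payload) is the part worth standardizing across producers. As a rough sketch, the same envelope could be built like this in Python. `build_event_fields` is a hypothetical helper, not part of any library, and no Redis server is contacted.

```python
import json
import uuid
from datetime import datetime, timezone


def build_event_fields(event_type: str, data: dict) -> dict:
    """Build the flat field map for one stream entry (same envelope as the PHP example)."""
    return {
        "event_type": event_type,
        "event_id": str(uuid.uuid4()),  # random UUID, analogous to UUID_TYPE_RANDOM
        "occurred_at": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        # Stream fields are flat strings, so the nested payload is serialized to JSON.
        "data": json.dumps(data),
    }


fields = build_event_fields("user.updated", {"user_id": 123, "display_name": "Alice"})
# With a client such as redis-py, this map would then be passed to XADD,
# for example client.xadd("events", fields).
```

Keeping the envelope in one helper means every producer emits the same four fields, so consumers can rely on `event_id` and `occurred_at` being present.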

Kafka (Conceptual PHP via REST proxy)

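    // Conceptual sketch: http_post() is a placeholder for your HTTP client and
    // $uuid is assumed to be generated earlier. A real REST proxy call also
    // needs the proxy's base URL and a Kafka-specific Content-Type header.
    $payload = [
        'records' => [[
            'value' => [
                'event_type'  => 'user.updated',
                'event_id'    => $uuid,
                'occurred_at' => gmdate('c'),
                'data'        => [...]  // payload elided
            ]
        ]]
    ];

    http_post('/topics/user-events', json_encode($payload));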

Kafka production is never “just code”.

It’s code + infrastructure + governance.

Consumption Model: The Heart of the Difference

Redis Streams Consumer Group (.NET)

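    // Assumes StackExchange.Redis, where `redis` is an IDatabase
    // and the stream key is the first argument.
    var entries = redis.StreamReadGroup(
        "events",      // stream key
        "user-group",  // consumer group
        "consumer-1",  // consumer name
        ">"            // only entries never delivered to this group
    );

    foreach (var entry in entries)
    {
        Process(entry);
        // Ack only after processing succeeds, so a crash leaves the entry pending.
        redis.StreamAcknowledge("events", "user-group", entry.Id);
    }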

Key properties:

At-least-once delivery

Explicit ack

Pending entries visible

Backpressure is obvious

Redis forces you to think operationally.
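The bookkeeping behind "explicit ack" and "pending entries visible" can be modeled in a few lines. The sketch below is a toy in-memory model in Python, not a Redis client: `ToyConsumerGroup` is a hypothetical class, and its `claim` method reassigns everything that is pending, whereas a real recovery worker would normally filter by idle time.

```python
class ToyConsumerGroup:
    """In-memory model of consumer-group bookkeeping; not a Redis client."""

    def __init__(self, entries: dict) -> None:
        self.entries = entries   # entry id -> payload, in insertion order
        self.delivered = set()   # ids handed out at least once
        self.pending = {}        # entry id -> consumer that has not acked yet

    def read_new(self, consumer: str) -> list:
        """Hand out never-delivered entries, like reading with the '>' id."""
        fresh = [eid for eid in self.entries if eid not in self.delivered]
        for eid in fresh:
            self.delivered.add(eid)
            self.pending[eid] = consumer
        return fresh

    def ack(self, entry_id: str) -> None:
        self.pending.pop(entry_id, None)

    def claim(self, new_consumer: str) -> list:
        """Reassign everything still pending, as a recovery worker would."""
        for eid in self.pending:
            self.pending[eid] = new_consumer
        return list(self.pending)


group = ToyConsumerGroup({"1-0": "a", "2-0": "b", "3-0": "c"})
batch = group.read_new("consumer-1")
group.ack(batch[0])                  # consumer-1 finishes one entry, then crashes
retried = group.claim("consumer-2")  # the unacked entries are redelivered
```

Entries that were delivered but never acknowledged stay in the pending set until some consumer finishes them. That is what makes delivery at-least-once rather than at-most-once.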

Kafka Consumer (.NET, simplified)

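    // Assumes Confluent.Kafka with EnableAutoCommit = false, so commits are explicit.
    consumer.Subscribe("user-events");

    while (true)
    {
        var cr = consumer.Consume();  // blocks until a record is available
        Process(cr.Message.Value);
        consumer.Commit(cr);          // commit after processing: at-least-once
    }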

Key properties:

Offset-based consumption

Replayable history

Ordering per partition

Commit semantics matter deeply

Kafka forces you to think in streams, not messages.
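Why commit semantics matter is easiest to see by simulating a crash. The following Python sketch is a simplified model with no Kafka client involved: `run_consumer` is a hypothetical function, and the committed offset is modeled as "the next offset to read", which is an assumption of the sketch.

```python
def run_consumer(log, start_offset, commit_first, crash_at):
    """Process log[start_offset:], simulating a crash while handling offset crash_at.

    Returns (processed_events, committed_offset), where committed_offset is the
    position a restarted consumer would resume from.
    """
    processed = []
    committed = start_offset
    for offset in range(start_offset, len(log)):
        if commit_first:
            committed = offset + 1       # commit before the work is done
        if offset == crash_at:
            return processed, committed  # crash: the work for this offset is lost
        processed.append(log[offset])
        if not commit_first:
            committed = offset + 1       # commit only after the work succeeded
    return processed, committed


log = ["e0", "e1", "e2", "e3"]

# Commit after processing: the restart resumes at the failed event.
done, resume_at = run_consumer(log, 0, commit_first=False, crash_at=2)
# Commit before processing: the restart skips the failed event.
done_early, resume_early = run_consumer(log, 0, commit_first=True, crash_at=2)
```

Committing after processing retries `e2` on restart (at-least-once, with possible duplicates if the crash falls between processing and commit). Committing first silently drops `e2` (at-most-once).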

Backpressure: Where Systems Break First

Redis Streams

Backpressure shows up as:

Stream length growth

Pending entry growth

Consumer idle time

This is visible pressure.

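Nothing crashes and nothing blocks producers, as long as Redis has memory to spare. Streams live in memory, so cap them (for example with MAXLEN on XADD) or alert on length before growth turns into an outage.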

Latency increases instead of failure.
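The three signals above can be turned into a simple check. This is a minimal Python sketch that compares two samples. `StreamSnapshot` and `pressure_signals` are hypothetical names, the 30 second idle limit is an arbitrary illustrative threshold, and in practice the numbers would come from commands such as XLEN, XPENDING and XINFO CONSUMERS.

```python
from dataclasses import dataclass


@dataclass
class StreamSnapshot:
    """One sample of the three backpressure signals."""
    length: int       # entries currently in the stream
    pending: int      # delivered but not yet acknowledged
    max_idle_ms: int  # longest time any consumer has gone without activity


def pressure_signals(prev: StreamSnapshot, curr: StreamSnapshot,
                     idle_limit_ms: int = 30_000) -> list:
    """Return human-readable warnings; the thresholds are illustrative only."""
    warnings = []
    if curr.length > prev.length:
        warnings.append("stream length growing")
    if curr.pending > prev.pending:
        warnings.append("pending entries growing")
    if curr.max_idle_ms > idle_limit_ms:
        warnings.append("consumer idle too long")
    return warnings


before = StreamSnapshot(length=1000, pending=10, max_idle_ms=500)
after = StreamSnapshot(length=5000, pending=400, max_idle_ms=45_000)
signals = pressure_signals(before, after)
```

A real monitor would look at trends over several samples rather than a single pair, but the inputs are the same three numbers.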

Kafka

Backpressure shows up as:

Consumer lag

Disk pressure

Broker I/O saturation

This is infrastructure pressure.

You must monitor and scale proactively.
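Consumer lag is just arithmetic: for each partition, the log end offset minus the group's committed offset. A small Python sketch of that calculation follows. `consumer_lag` is a hypothetical helper, the offsets are made-up sample values, and treating a partition with no commit as starting from offset 0 is a simplifying assumption.

```python
def consumer_lag(end_offsets: dict, committed: dict) -> dict:
    """Lag per partition = log end offset - committed offset.

    Partitions without a committed offset are assumed to start at 0,
    which ignores any records already removed by retention.
    """
    return {p: end - committed.get(p, 0) for p, end in end_offsets.items()}


end_offsets = {0: 1500, 1: 1480, 2: 900}  # partition -> next offset to be written
committed = {0: 1500, 1: 1200}            # partition -> next offset to be read
lag = consumer_lag(end_offsets, committed)
total_lag = sum(lag.values())
```

Watching lag per partition rather than only the total helps spot a single stuck partition, which a healthy-looking total can hide.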

Retention and Replay

This is Kafka’s strongest argument.

Kafka:

Replay from any offset still within retention

Rebuild state

Support event sourcing

Power stream processing

Redis Streams:

Replay is limited to entries still in the stream

Retention is bounded by memory and trimming (MAXLEN or MINID)

History is not the product

If your system needs:

Auditing

Analytics

Historical reconstruction

Kafka starts to make sense.

If not, Kafka is usually overkill.
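"Rebuild state" means folding the retained events, in order, into a fresh view. Here is a minimal Python sketch of that idea using an in-memory list as the log. The `user.deleted` event type and the `apply` and `rebuild` helpers are hypothetical, introduced only for illustration.

```python
def apply(state: dict, event: dict) -> dict:
    """Fold one event into the current-state view, keyed by user id."""
    if event["event_type"] == "user.updated":
        state[event["user_id"]] = event["display_name"]
    elif event["event_type"] == "user.deleted":  # hypothetical event type
        state.pop(event["user_id"], None)
    return state


def rebuild(log: list, from_offset: int = 0) -> dict:
    """Replay the log from an offset and return the resulting state."""
    state = {}
    for event in log[from_offset:]:
        apply(state, event)
    return state


log = [
    {"event_type": "user.updated", "user_id": 123, "display_name": "Alice"},
    {"event_type": "user.updated", "user_id": 456, "display_name": "Bob"},
    {"event_type": "user.updated", "user_id": 123, "display_name": "Alice B."},
    {"event_type": "user.deleted", "user_id": 456},
]
current = rebuild(log)
as_of_second_event = rebuild(log[:2])
```

This only works if the early events are still there. Once a bounded stream has trimmed them, the same replay produces an incomplete view, which is why history-dependent use cases push toward a retained log.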

Operational Reality (The Part Blog Posts Skip)

Redis Streams Operational Profile

Single dependency (Redis)

Familiar tooling

Simple failure modes

Easy to reason about

Kafka Operational Profile

Broker clusters

ZooKeeper or KRaft for cluster metadata

Capacity planning

On-call expertise

Schema governance

Kafka is powerful—but it is not casual infrastructure.

When Redis Streams Is the Right Choice

Redis Streams shines when:

Events are transient

You want buffering, not history

You already operate Redis

Latency matters

You value simplicity

The core system must stay protected

Redis is often the correct first backbone.

When Kafka Earns Its Complexity

Kafka earns its place when:

Events are data

Replay is mandatory

Throughput is extreme

Multiple consumers need independent history

Stream processing is required

Kafka is not “future-proofing” by default.

It’s a commitment.

A Common and Valid Hybrid Pattern

Many mature systems use both:

Redis Streams → buffer & protect core systems

Kafka → long-term log & analytics backbone

RabbitMQ → routing and fan-out

Each tool does one job well.

This is not redundancy.

This is separation of concerns.
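One way the hand-off from the buffer to the long-term log could look is sketched below in Python. It is an in-memory model under stated assumptions: `forward` is a hypothetical function, plain lists and sets stand in for both backbones, and redelivered entries are dropped by `event_id`, which assumes producers set that field uniquely.

```python
def forward(buffer_entries, log, seen_ids):
    """Copy buffered events into the long-term log and return the entry ids to ack.

    buffer_entries: list of (entry_id, event) pairs read from the buffer.
    log: list standing in for the long-term log.
    seen_ids: set of event_id values already written, used to drop redeliveries.
    """
    to_ack = []
    for entry_id, event in buffer_entries:
        if event["event_id"] not in seen_ids:
            log.append(event)
            seen_ids.add(event["event_id"])
        to_ack.append(entry_id)  # safe to ack only once the event is in the log
    return to_ack


log, seen = [], set()
first = forward([("1-0", {"event_id": "a"}), ("2-0", {"event_id": "b"})], log, seen)
# Entry 2-0 is redelivered, e.g. after a crash before its ack reached the buffer.
second = forward([("2-0", {"event_id": "b"}), ("3-0", {"event_id": "c"})], log, seen)
logged_ids = [event["event_id"] for event in log]
```

Acknowledging only after the write keeps the buffer's at-least-once guarantee intact, and the `event_id` check keeps the resulting duplicates out of the log.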

The Most Dangerous Mistake

The most dangerous mistake is choosing Kafka because:

“We might need it later”

“It’s industry standard”

“It feels more scalable”

Premature Kafka adoption often:

Slows teams down

Hides architectural problems

Adds failure modes early

Consumes operational energy

Scale problems should earn their solutions.

Decision Cheat Sheet

| Requirement | Redis Streams | Kafka |
| --- | --- | --- |
| Burst buffering | ✅ | ⚠️ |
| Backpressure visibility | ✅ | ⚠️ |
| Event replay | ⚠️ | ✅ |
| Operational simplicity | ✅ | ❌ |
| Event sourcing | ❌ | ✅ |
| Protecting legacy core | ✅ | ⚠️ |
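The cheat sheet can also be read as a lookup table. As a rough sketch in Python, the hypothetical `candidates` helper below rules out any backbone rated ❌ for a stated requirement and lists the ⚠️ ratings as caveats. The ratings are copied from the table. The rule itself is an assumption made for illustration, not a substitute for judgment.

```python
OK, CAUTION, NO = "ok", "caution", "no"

# Ratings transcribed from the cheat sheet: ✅ = OK, ⚠️ = CAUTION, ❌ = NO.
CHEAT_SHEET = {
    "burst_buffering":         {"Redis Streams": OK,      "Kafka": CAUTION},
    "backpressure_visibility": {"Redis Streams": OK,      "Kafka": CAUTION},
    "event_replay":            {"Redis Streams": CAUTION, "Kafka": OK},
    "operational_simplicity":  {"Redis Streams": OK,      "Kafka": NO},
    "event_sourcing":          {"Redis Streams": NO,      "Kafka": OK},
    "protecting_legacy_core":  {"Redis Streams": OK,      "Kafka": CAUTION},
}


def candidates(requirements: list) -> dict:
    """Return {backbone: [requirements rated 'caution']} for backbones with no 'no' rating.

    Redis Streams is checked first, following the "smallest backbone" preference.
    """
    result = {}
    for backbone in ("Redis Streams", "Kafka"):
        ratings = [CHEAT_SHEET[r][backbone] for r in requirements]
        if NO not in ratings:
            result[backbone] = [r for r in requirements
                                if CHEAT_SHEET[r][backbone] == CAUTION]
    return result


buffering_first = candidates(["burst_buffering", "event_replay"])
history_first = candidates(["event_sourcing", "event_replay"])
conflicting = candidates(["event_sourcing", "operational_simplicity"])
```

An empty result, as in the last call, is a hint that no single backbone satisfies the requirements and that a split of responsibilities may be the better fit.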

Closing Thought

Event backbones are not trophies.

They are load-bearing structures.

Choose the one that:

Matches today’s reality

Fails predictably

Keeps teams moving

Leaves room to evolve

Redis Streams is not a “lightweight Kafka”.

Kafka is not a “better Redis”.

They are tools for different moments in a system’s life.

The best architecture is the one that lets you grow without fear, not the one that impresses on day one.