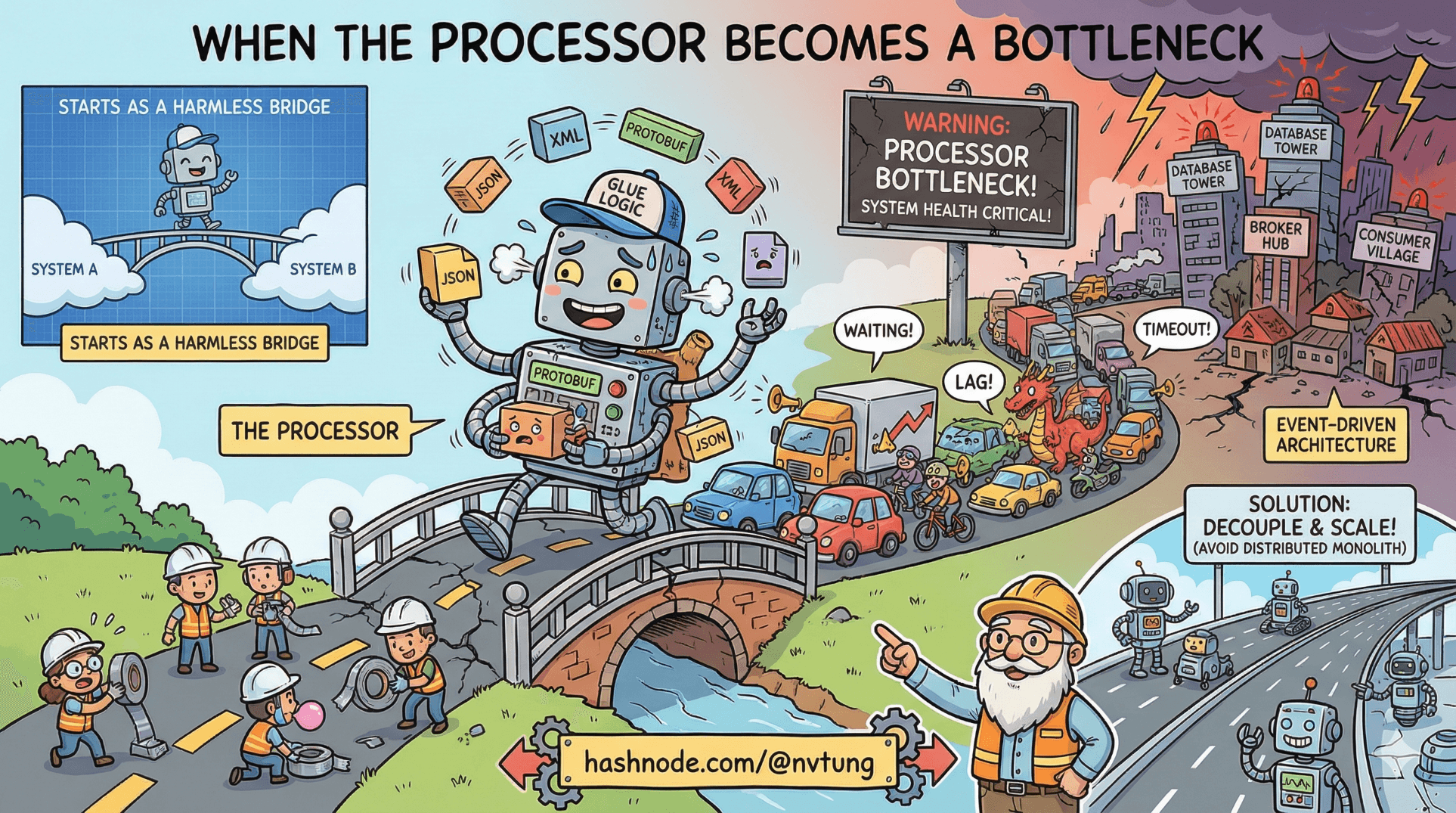

When the Processor Becomes a Bottleneck

How central processors quietly turn into system bottlenecks

Series: Designing a Microservice-Friendly Datahub

In many event-driven architectures, there is a service that looks harmless on paper but carries more risk than any database or broker.

It’s the Processor.

The Processor often starts life as a convenience: a bridge between systems, a translator between protocols, a place to put “just a bit of glue logic.” Over time, traffic grows, responsibilities accumulate, and suddenly the entire system’s health depends on whether this one service can keep up.

This article is about what happens when the Processor becomes a bottleneck—how to recognize it early, why it happens almost inevitably, and how to fix it without turning your architecture into a distributed monolith.

Why the Processor Is So Vulnerable

Processors sit at a dangerous intersection:

Upstream systems trust them to move data forward

Downstream systems depend on them for coordination

They often speak multiple protocols (Redis, RabbitMQ, HTTP)

They are expected to “just work” under load

That combination creates gravity.

Every shortcut, every “quick fix,” every cross-domain decision added here makes the Processor more central—and more fragile.

The First Signs of Trouble (Architectural Smells)

Processor bottlenecks rarely announce themselves clearly. They whisper first.

Common early signals:

Redis Streams pending entries growing steadily

RabbitMQ queues never draining

CPU usage uneven across processor instances

One handler dominating execution time

“Just scale it vertically” becoming the default response

At this stage, the problem is architecture, not performance tuning.

Smell #1: Too Many Responsibilities

The Processor often starts doing things it shouldn’t:

Applying business rules

Coordinating multi-step workflows

Making synchronous API calls to many services

Mutating state it doesn’t own

Example smell (pseudo-.NET):

if (event.Type == "order.created")

{

ValidateOrder(event);

ReserveInventory(event);

ChargePayment(event);

NotifyUser(event);

}

This looks efficient.

It is actually centralized orchestration disguised as event handling.

Smell #2: Synchronous Calls Inside Async Flows

Nothing kills throughput faster than blocking inside an event pipeline.

Example:

await httpClient.PostAsync("/inventory/reserve", payload);

await httpClient.PostAsync("/billing/charge", payload);

Now:

One slow service blocks the Processor

Retries amplify load

Backpressure moves upstream

Event lag explodes

Async architecture with sync behavior is worse than fully synchronous systems—it hides failure.

Smell #3: “One Processor to Rule Them All”

If one Processor handles:

Redis → RabbitMQ

RabbitMQ → API

External integrations

Batch jobs

Reconciliation logic

…then it is no longer a bridge.

It is a God service with a queue.

This is the most common failure mode.

Diagnosing the Bottleneck (Practically)

Redis Streams: Look at Pending Entries

XPENDING events processor-group

If:

Pending count keeps increasing

Oldest idle time grows

Messages are stuck to one consumer

Your Processor is not keeping up.

RabbitMQ: Look at Unacked Messages

Key indicators:

High unacked count

Low ack rate

Consumers “connected” but not progressing

This means processing—not routing—is the problem.

Why Scaling the Processor Often Fails

“Just add more instances” works only if:

Handlers are stateless

Work is evenly distributed

No shared locks or ordering constraints exist

External calls don’t dominate runtime

If any of these are false, horizontal scaling stalls quickly.

At that point, scaling exposes architectural coupling rather than fixing it.

The Right Fixes (In Order of Effectiveness)

1. Split by Responsibility, Not by Load

Instead of one Processor, use multiple narrow processors.

Bad split:

Processor A

Processor B (identical)

Good split:

Event Translator (Redis → RabbitMQ)

API Caller Processor

External Integration Processor

Each has:

One input

One responsibility

One scaling axis

2. Push Meaning to Consumers

The Processor should translate, not interpret.

Bad:

if (event.Status == "VIP")

{

ApplySpecialRules();

}

Good:

Publish("user.updated", event);

Let consumers decide what “VIP” means.

3. Replace Sync Calls With Events

Instead of:

await httpClient.PostAsync("/billing/charge", payload);

Emit:

Publish("payment.requested", payload);

Now:

The Processor moves on

Billing scales independently

Failure is isolated

Retries are safe

4. Introduce Internal Queues

If some work is slow but unavoidable, isolate it.

Example:

Fast Processor → publishes to

slow.workSlow Worker consumes at its own pace

This turns blocking into buffering.

A Concrete Refactor Example

Before (Monolithic Processor)

Handle(event)

{

Validate(event);

CallApiA(event);

CallApiB(event);

PublishResult(event);

}

After (Decoupled)

Handle(event)

{

Publish("event.received", event);

}

Then:

Worker A handles validation

Worker B handles API A

Worker C handles API B

Each scales independently

Latency increases slightly.

Throughput and reliability increase dramatically.

The Hard Truth: Processors Want to Become Monoliths

Processors accumulate logic because:

They feel “central”

They see all events

They’re convenient to modify

They’re already deployed

Resisting that pull requires discipline, not tools.

A good Processor is boring.

A clever Processor is a future outage.

When a Processor Is Still the Right Tool

Processors are valuable when they:

Translate protocols

Normalize schemas

Enforce contracts

Handle retries and DLQs

Absorb infrastructure complexity

They are dangerous when they:

Decide business outcomes

Coordinate workflows

Own domain rules

Become required for every feature

A Simple Litmus Test

Ask this question:

“If this Processor is down, can the rest of the system still make progress?”

If the answer is “no,” the Processor is too important.

Closing Thought

Processors don’t usually fail because they’re slow.

They fail because they’re asked to be too smart.

Event-driven systems scale by distributing meaning, not centralizing it.

When the Processor becomes a bottleneck, it’s not telling you to optimize—it’s telling you to give responsibility back to the edges.

That’s not a performance fix.

That’s architectural maturity.