Why Containerized Local Development Exists

Understanding the real problems Docker was built to solve.

Series: Containers, Actually: Building Real Local Dev Environments

ACT I — Foundations: Containerized Local Development

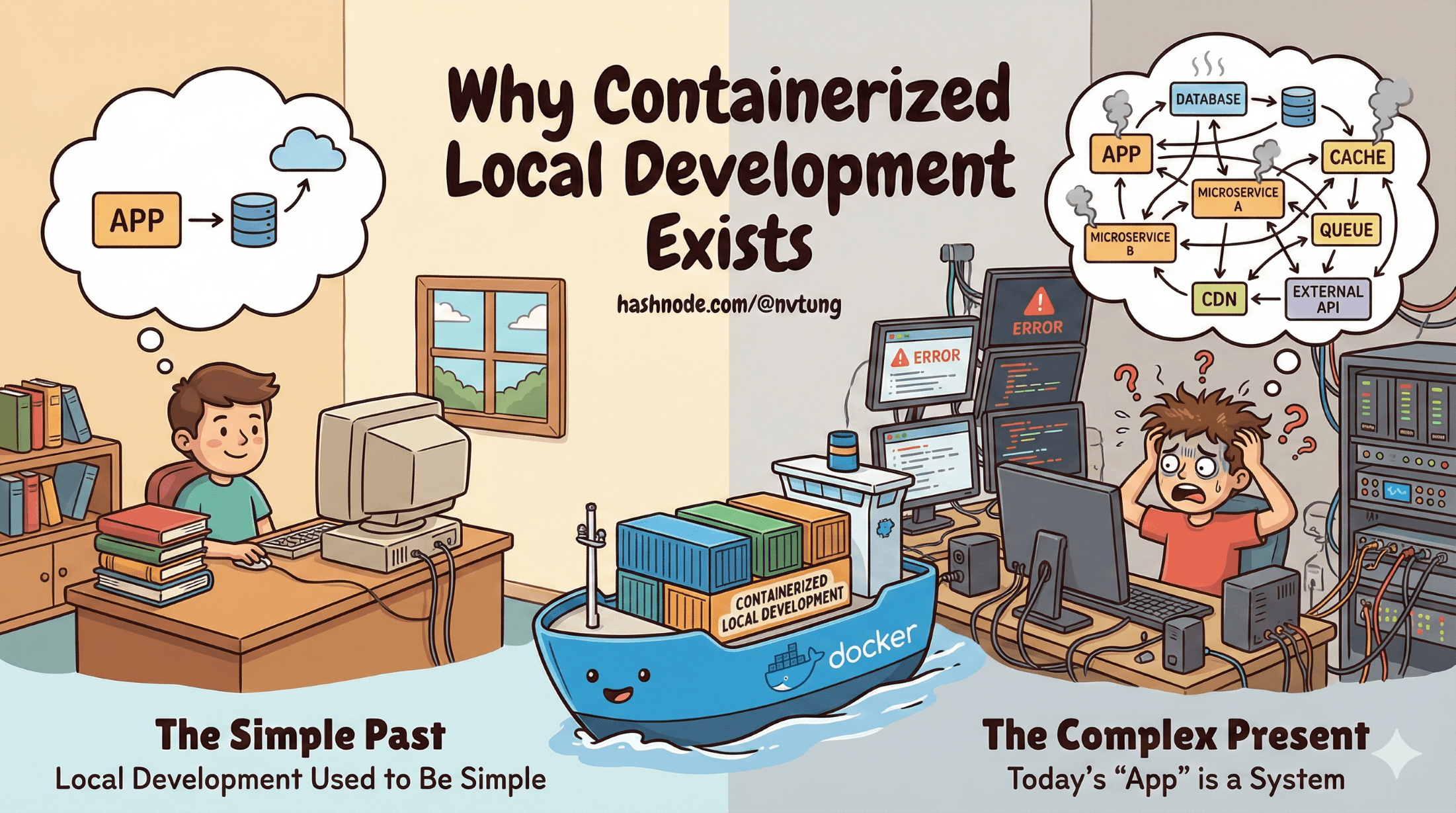

Local development used to be simple.

You installed a language runtime, pulled a repository, ran a server, and got to work. If something broke, the fix was usually local and understandable. The mental model fit comfortably in your head.

That model no longer survives contact with modern web applications.

Today’s “app” is not an app—it’s a system. And systems behave differently. They fail in stranger ways, require more coordination, and punish assumptions that used to be harmless. Containerized local development exists because our old approach collapsed under this weight.

This article explains why.

“Works on My Machine” Is Not a Joke — It’s a Symptom

The phrase “works on my machine” became a meme because it’s painfully familiar. But it’s not really about incompetence or laziness. It’s a signal that the development process itself is structurally flawed.

When two developers pull the same code and get different results, the problem is rarely the code. It’s the environment.

Different OS versions

Different package managers

Different system libraries

Different background services

Different default configurations

Each machine becomes a snowflake. Over time, these differences accumulate until behavior diverges in ways no one intended or documented. Bugs appear that cannot be reproduced elsewhere. Fixes accidentally depend on local state. Debugging turns into archaeology.

This is not a personal failure. It’s environment drift.

Environment Drift: The Slow Death of Consistency

Environment drift happens when environments that are supposed to be equivalent gradually diverge.

It starts small:

One developer installs a newer database version.

Another upgrades Node globally.

Someone adds a system-level dependency and forgets to document it.

Weeks later, the environments behave differently. Months later, no one knows why.
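
One way to make drift visible is to compare snapshots of what each machine actually has installed. The sketch below is illustrative only: the `find_drift` helper, the developer names, and the version strings are all hypothetical, chosen to mirror the three small changes listed above.

```python
from typing import Dict, Optional, Tuple


def find_drift(
    baseline: Dict[str, str], other: Dict[str, str]
) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Return every tool whose version differs between two snapshots."""
    drift = {}
    for name in sorted(baseline.keys() | other.keys()):
        expected = baseline.get(name)  # None if the tool is absent
        actual = other.get(name)
        if expected != actual:
            drift[name] = (expected, actual)
    return drift


# Hypothetical snapshots of two developer machines.
alice = {"node": "18.19.0", "postgres": "15.4", "redis": "7.2.3"}
bob = {"node": "20.11.0", "postgres": "16.1", "redis": "7.2.3", "imagemagick": "7.1.1"}

for tool, (expected, actual) in find_drift(alice, bob).items():
    print(f"{tool}: {expected} != {actual}")
```

Nothing here prevents drift. It only shows that drift is a difference between two lists that nobody wrote down, which is exactly what a declared environment makes explicit.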

By the time the app reaches staging or production, developers are no longer confident that what they tested locally matches what’s running elsewhere. This gap creates fear, delays, and ritualized debugging.

Containerization exists largely to freeze environments in time, turning them into artifacts instead of accidents.

Modern Full-Stack Apps Are Dependency Graphs, Not Programs

Another reason native local setups break down is that modern apps are no longer single runtimes.

A typical full-stack application today depends on:

An application runtime (Node, PHP, Python, etc.)

A database

A cache

A queue or message broker

A search engine

Background workers

Sometimes observability or admin tools

These components don’t exist in isolation. They form a dependency graph—a network of services that depend on each other’s availability, versions, ports, credentials, and startup order.
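
To make the graph idea concrete, here is a minimal sketch that derives a valid startup order from declared dependencies using Python's standard `graphlib` module. The service names and the edges between them are assumptions for illustration; a real application would have its own.

```python
from graphlib import TopologicalSorter

# Hypothetical services, each mapped to the services it needs running first.
depends_on = {
    "database": set(),
    "cache": set(),
    "queue": set(),
    "search": set(),
    "app": {"database", "cache", "queue", "search"},
    "worker": {"database", "queue"},
}

# static_order() yields each service only after all of its dependencies.
startup_order = list(TopologicalSorter(depends_on).static_order())
print(startup_order)
```

Several orderings are valid, so the exact output can vary; the guarantee is only that dependencies come first. A circular dependency raises `graphlib.CycleError`, which is the kind of mistake a remembered startup sequence tends to hide.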

Installing these natively means:

Managing multiple versions of the same service

Avoiding port conflicts

Remembering startup sequences

Cleaning up state safely

Explaining the setup to new team members

On Windows, this complexity is amplified. Many services are Linux-first: they behave differently or perform poorly when installed natively. At some point, the local machine stops being a development environment and becomes a fragile experiment.

Containers shift this complexity out of your host system and into a declared, versioned configuration.
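
Once the setup is declared as data, problems such as port conflicts can be checked mechanically instead of being discovered at startup. The following is a rough sketch, not a real tool: `find_port_conflicts` and the service names are hypothetical, and the ports are simply the usual defaults for Postgres (5432) and Redis (6379).

```python
from collections import defaultdict
from typing import Dict, List


def find_port_conflicts(services: Dict[str, int]) -> Dict[int, List[str]]:
    """Group services by host port and keep only ports claimed more than once."""
    by_port = defaultdict(list)
    for name, port in services.items():
        by_port[port].append(name)
    return {port: sorted(names) for port, names in by_port.items() if len(names) > 1}


# Hypothetical declaration: two Postgres versions both claim host port 5432.
declared = {"postgres-15": 5432, "postgres-16": 5432, "redis": 6379, "app": 3000}
print(find_port_conflicts(declared))
```

With native installs, this information lives in scattered config files and memory. In a declared configuration it sits in one place, where a conflict is a visible line rather than a surprise.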

Local vs Staging vs Production: The Parity Problem

A recurring failure mode in software teams is environment mismatch:

Local works, staging fails

Staging works, production fails

Production fails in ways no one has ever seen

This usually happens because environments are hand-built differently.

Local might use SQLite while production uses MySQL.

Local might skip caching while production relies on Redis.

Local might use a different web server entirely.

Each shortcut increases divergence. Each divergence increases risk.

Containerized local development aims to reduce this gap—not by making everything identical, but by making differences explicit and intentional.

When the same services run locally, in staging, and in production, built from the same images and with any configuration differences declared explicitly, the mental model becomes stable. Bugs reproduce. Fixes translate. Confidence increases.

This is called environment parity, and it’s one of the strongest arguments for containers.
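
Making differences explicit can be as simple as listing what each stage runs and reporting where the stages disagree. This sketch assumes a hypothetical `parity_gaps` helper and made-up stage definitions that mirror the shortcuts described above.

```python
from typing import Dict


def parity_gaps(environments: Dict[str, Dict[str, str]]) -> Dict[str, Dict[str, str]]:
    """Return services that are not backed by the same thing in every stage."""
    all_services = set()
    for services in environments.values():
        all_services.update(services)

    gaps = {}
    for service in sorted(all_services):
        backing = {
            env: services.get(service, "(missing)")
            for env, services in environments.items()
        }
        if len(set(backing.values())) > 1:
            gaps[service] = backing
    return gaps


# Hypothetical stages: local swaps the database and skips the cache.
environments = {
    "local": {"database": "sqlite", "web": "node:20"},
    "staging": {"database": "mysql:8.0", "cache": "redis:7", "web": "node:20"},
    "production": {"database": "mysql:8.0", "cache": "redis:7", "web": "node:20"},
}
for service, backing in parity_gaps(environments).items():
    print(service, backing)
```

A gap in this report is not automatically a bug. The point is that each one is now a decision someone can see and question, rather than an accident of how a machine was set up.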

Why Virtual Machines Alone Weren’t Enough

Before Docker, many teams tried solving these problems with virtual machines.

VMs helped. They isolated environments and standardized OS-level behavior. But they came with serious drawbacks:

Heavy resource usage

Slow startup times

Poor developer ergonomics

Difficult sharing and versioning

Coarse-grained isolation

A VM is an entire computer. A container is a process with boundaries.

Containers start faster, consume fewer resources, and can be composed together easily. You can run ten services without running ten operating systems. You can version container definitions alongside your code. You can rebuild them repeatably, as long as base images and dependency versions are pinned.

VMs solved isolation. Containers solved repeatability and scale.

Native Installs vs Containers: Control vs Entropy

Installing everything natively gives the illusion of control. You can see the files, tweak the configs, and run commands directly. But this control is deceptive.

Native installs accumulate entropy:

Global state

Hidden dependencies

Implicit assumptions

Containers replace that with constraint. You describe what you need, build it, and throw it away when it’s wrong. This disposability is a feature, not a flaw.

If rebuilding your environment is painful, your environment is already broken.

A Gentle Introduction to Immutable Infrastructure

Containerized development borrows an idea from modern infrastructure: immutability.

Instead of modifying environments in place, you:

Define them declaratively

Build them

Replace them when changes are needed

You don’t “fix” a container—you rebuild it.

This mindset reduces debugging surface area and eliminates an entire class of “how did it get into this state?” problems. While local development doesn’t need full production-grade immutability, borrowing this principle dramatically improves reliability.
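
The same define, build, replace cycle can be shown in miniature with a frozen dataclass. This is only an analogy in code, under the assumption of a hypothetical `EnvironmentSpec` type with made-up fields; it is not how Docker represents images.

```python
from dataclasses import FrozenInstanceError, dataclass, replace


@dataclass(frozen=True)
class EnvironmentSpec:
    """Hypothetical, simplified description of one service's environment."""

    image: str
    node_version: str


current = EnvironmentSpec(image="app:1", node_version="18")

try:
    current.node_version = "20"  # In-place modification is rejected.
    mutated = True
except FrozenInstanceError:
    mutated = False

# The supported path is to build a new spec and swap it in.
rebuilt = replace(current, image="app:2", node_version="20")
print(current, rebuilt, sep="\n")
```

Because the old value is never altered, there is always a known-good definition to fall back to, and no intermediate state to explain later.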

Reproducibility Is the Real Goal

Docker is often introduced as a convenience tool. That framing undersells it.

The real value of containerized local development is reproducibility:

Anyone can build the same environment

At any time

On any machine that can run Docker

With predictable results

When environments are reproducible, onboarding speeds up, bugs become tractable, and confidence returns to the development process.
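
One way to think about reproducibility is that identical declarations should be recognizably identical, no matter who wrote them or in what order. The sketch below hashes a declared environment into a short ID; `spec_fingerprint` and the image tags are hypothetical and serve only to illustrate the idea.

```python
import hashlib
import json


def spec_fingerprint(spec: dict) -> str:
    """Hash a declared environment so equal declarations get equal IDs."""
    # Sorting keys makes the result independent of declaration order.
    canonical = json.dumps(spec, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


# Hypothetical declarations written by two people in a different key order.
mine = {"runtime": "node:20.11", "database": "postgres:16.1"}
yours = {"database": "postgres:16.1", "runtime": "node:20.11"}
# Same services, but with a floating tag instead of a pinned version.
floating = {"runtime": "node:latest", "database": "postgres:16.1"}

print(spec_fingerprint(mine) == spec_fingerprint(yours))
print(spec_fingerprint(mine) == spec_fingerprint(floating))
```

The first comparison matches and the second does not. A floating tag such as `latest` is a different declaration from a pinned one, and it can resolve to different contents over time, which is why pinning versions matters for predictable results.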

Docker doesn’t remove complexity. It contains it.

What Docker Actually Solves (and What It Doesn’t)

Docker does not:

Write better code

Eliminate bugs

Replace understanding

Docker does:

Stabilize environments

Encode assumptions explicitly

Reduce drift

Improve parity across stages

Make complex systems manageable locally

Understanding this distinction is critical. Without it, Docker becomes another opaque tool. With it, Docker becomes infrastructure you can reason about.

Where We Go Next

Now that we’ve established why containerized local development exists, the next step is understanding what we’re actually trying to run.

In the next article, we’ll dissect what a modern full-stack application really consists of—and why treating it as a single process is no longer viable.

Complexity didn’t appear by accident. It arrived because we asked software to do more. Containers are one of the ways we learned to live with that complexity—without letting it live inside our heads.